Public Data vs Personal Data: What Scraping Teams Need to Understand

If you work with web data for long enough, one question comes up sooner or later: what exactly are we allowed to collect?

It sounds like something that should be straightforward. Data is either public or personal, and once you’ve worked out which is which, everything else should fall into place. That’s the assumption most teams start with, especially when they’re building their first scraping pipelines.

In reality, it’s rarely that clear-cut. Most teams aren’t trying to push boundaries or operate in gray areas. They’re collecting publicly available information to power products, improve decision-making, or understand what’s happening in a market. The challenge is that once you start collecting data at scale, the line between different types of data can blur in ways that aren’t always obvious at first.

That’s where things start to get more interesting, and sometimes a bit more complicated.

Scrape Smarter, Not More

Focus on accuracy and consistency with proxy infrastructure designed for modern data pipelines.

What People Actually Mean by Public Data

When people talk about public data, they’re usually referring to information that’s freely accessible on the open web.

That includes things like product listings, pricing, availability, company information, and search results. If someone can visit a page without logging in or accessing restricted systems, and the information is visible to anyone, it’s generally considered public.

From a scraping perspective, this is where most pipelines begin. Collecting public data is what makes it possible to build pricing intelligence tools, travel aggregators, market research platforms, and all sorts of other applications that depend on having a clear view of what’s happening across the web.

Technically, this type of data is relatively easy to access compared to anything behind authentication layers or private systems.

Where it becomes more complex is how that data is interpreted, structured, and used once it’s collected.

Why “Public” Doesn’t Mean “Anything Goes”

One of the biggest misconceptions is that if data is public, it comes with no constraints.

That assumption tends to hold up at a very surface level, but it starts to fall apart once you look at how data behaves when it’s collected at scale and combined with other sources.

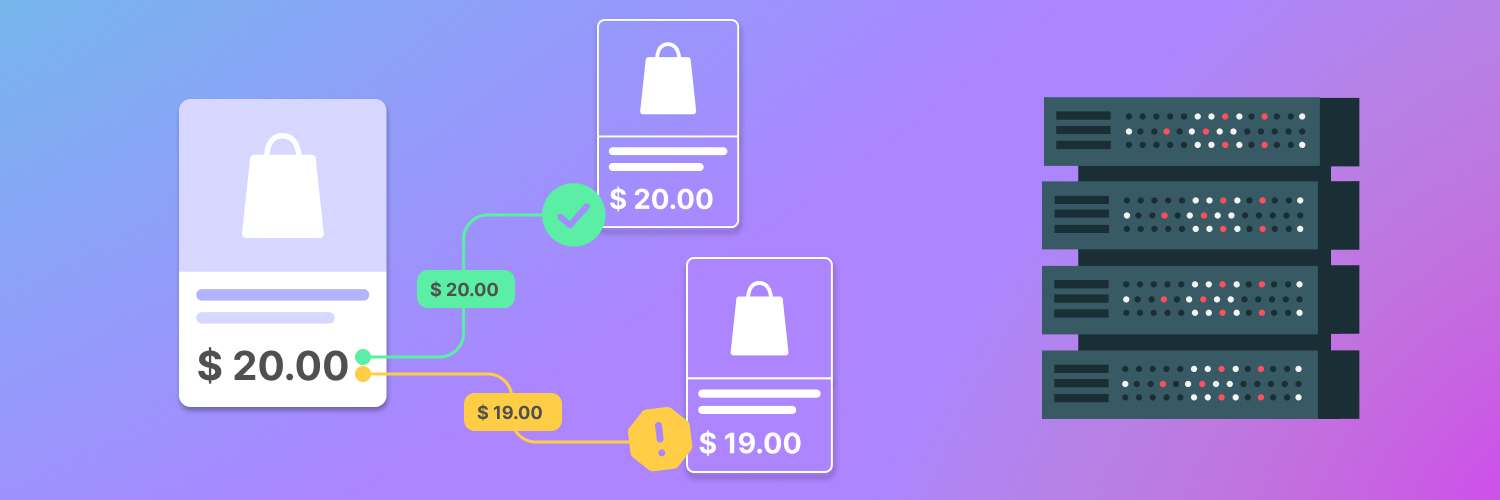

Take something like retail pricing. Product prices, availability, and descriptions are all publicly visible, and collecting that information is a standard use case. The data is tied to products and markets, not individuals, which makes it relatively straightforward to work with.

Now compare that to information about people that happens to be publicly visible. That could include usernames, profile details, or content created by individuals. Even though it’s accessible, it carries a different level of sensitivity, especially when it’s aggregated, analyzed, or used beyond its original context.

The distinction isn’t only about access. It also depends on context, intent, and how the data is ultimately used.

Understanding Personal Data in Practice

Personal data is any information that can identify or be linked back to an individual.

Some of it is obvious, like names, email addresses, or phone numbers. Other types are less direct, such as usernames, location signals, or patterns of activity that can be associated with a specific person when combined with other data points.

In scraping workflows, personal data often shows up in ways that weren’t part of the original plan.

You might be collecting product listings and end up capturing seller information alongside them. You might be scraping search results and pull in snippets that reference individuals. You might collect reviews that include user-generated content tied to identifiable profiles.

None of that necessarily means the pipeline is doing anything wrong, but it does mean that the dataset now contains different types of data that need to be handled with more awareness.
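One way to build that awareness is to scan scraped records for values that look like direct identifiers. The sketch below is illustrative only: the field names, the sample record, and both regular expressions are assumptions, and the patterns catch common email and phone formats rather than every variant. Long numeric IDs can trigger the phone pattern, so treat a match as a prompt for review, not a verdict.

```python
import re

# Illustrative patterns (assumptions): they cover common formats only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def find_personal_signals(record: dict) -> dict:
    """Return {field_name: [signal types]} for string fields matching a pattern."""
    flagged = {}
    for field, value in record.items():
        if not isinstance(value, str):
            continue
        signals = []
        if EMAIL_RE.search(value):
            signals.append("email")
        if PHONE_RE.search(value):
            signals.append("phone")
        if signals:
            flagged[field] = signals
    return flagged


# Hypothetical scraped record: a product listing with a seller note attached.
record = {
    "title": "Wireless Mouse",
    "price": "19.99",
    "seller_note": "Questions? Email jane.doe@example.com",
}
print(find_personal_signals(record))
```

A check like this does not decide what to do with the data. It only makes it visible that a product record is carrying something other than product information.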

Why This Gets Harder at Scale

At a small scale, it’s relatively easy to keep track of what’s being collected. You can inspect outputs, review fields, and adjust things manually if something doesn’t look right. As soon as you scale up, that level of visibility becomes much harder to maintain.

When a pipeline is processing thousands or millions of requests, small inconsistencies become much more significant. A field that occasionally includes personal information can become a consistent part of the dataset if it isn’t filtered or categorized properly.
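Because manual inspection stops working at this volume, one option is to measure how often each field contains something that looks personal across a sample of records. The following is a minimal sketch under stated assumptions: the batch, the field names, and the single email pattern are all hypothetical stand-ins for whatever signals matter in a given pipeline.

```python
import re
from collections import Counter

# Illustrative pattern (assumption): a simple email matcher.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def personal_rate_by_field(records: list) -> dict:
    """Return, per field, the share of string values that contain an email."""
    seen = Counter()
    flagged = Counter()
    for record in records:
        for field, value in record.items():
            if not isinstance(value, str):
                continue
            seen[field] += 1
            if EMAIL_RE.search(value):
                flagged[field] += 1
    return {field: flagged[field] / seen[field] for field in seen}


# Hypothetical sample drawn from a larger crawl.
batch = [
    {"title": "Mouse", "description": "Compact wireless mouse"},
    {"title": "Keyboard", "description": "Contact seller at sam@example.com"},
    {"title": "Monitor", "description": "27-inch display"},
    {"title": "Webcam", "description": "1080p webcam"},
]
rates = personal_rate_by_field(batch)
print(rates)
```

In this sample the description field is flagged in one record out of four. Tracking a rate like this over time shows whether an occasional occurrence is turning into a consistent part of the dataset.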

What makes this tricky is that everything still looks structured. The data arrives, the pipeline runs, and nothing fails in an obvious way. The issue isn’t that the system breaks; it’s that it starts collecting more than it was originally designed to handle.

That’s why clarity becomes more important as systems grow.

How Data Gets Mixed Without Anyone Noticing

One of the more subtle challenges in scraping is how easily different types of data can end up mixed together.

A single web page often contains multiple layers of information. A product listing might include pricing, availability, seller details, and customer reviews all in one place. A marketplace page might combine product data with seller profiles and user-generated content.

From a technical perspective, it’s all part of the same page, but from a data perspective, it serves very different purposes.

If a pipeline is designed to extract everything without being selective, it will naturally pull in all of those elements. Over time, datasets that were intended to focus on market signals can start to include information that wasn’t part of the original use case.

This usually happens gradually rather than all at once, which is why it’s easy to miss.

Designing Pipelines with Clear Boundaries

One of the most effective ways to avoid these issues is to be deliberate about what the pipeline is designed to collect.

That starts with defining clear boundaries.

Instead of capturing every available field, pipelines can be structured to focus on the data that actually supports the use case. For example, a pricing system might prioritize product identifiers, prices, and availability while excluding user-level information that isn’t needed.

This approach keeps datasets cleaner and easier to work with, and it also reduces the amount of downstream processing required, since the data has already been filtered at the point of collection.
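Filtering at the point of collection can be as simple as an allowlist applied before a record is stored. This is a sketch rather than a prescribed implementation: the field names in ALLOWED_FIELDS and the sample record are assumptions chosen to match the pricing example.

```python
# Hypothetical allowlist for a pricing use case: only these fields are kept.
ALLOWED_FIELDS = frozenset({"product_id", "price", "currency", "availability"})


def keep_allowed(record: dict, allowed=ALLOWED_FIELDS) -> dict:
    """Drop every field that is not explicitly part of the use case."""
    return {key: value for key, value in record.items() if key in allowed}


# Hypothetical raw record as extracted from a marketplace page.
raw = {
    "product_id": "SKU-1042",
    "price": 19.99,
    "currency": "USD",
    "availability": "in_stock",
    "seller_name": "Jane D.",
    "review_author": "mike_1987",
}
clean = keep_allowed(raw)
print(clean)
```

An allowlist is used here instead of a blocklist because new fields that appear on a page are excluded by default. A blocklist would let them through until someone noticed.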

When boundaries are clear from the beginning, the system stays more predictable over time.

Why Use Case Should Drive Data Collection

The way data is handled should always be tied back to its intended use.

If the goal is to understand market pricing, the focus should remain on product and market-level data. If the goal is to analyze trends, the structure of the dataset should reflect that purpose.

When pipelines grow without a clear connection to how the data will be used, they tend to accumulate unnecessary complexity. Fields get added, datasets expand, and over time it becomes harder to maintain clarity around what’s actually needed.

Keeping the use case front and center makes it easier to make decisions about what belongs in the dataset and what doesn’t.

Why Consistency Matters Over Time

Even when a pipeline is well designed, it doesn’t stay static. New data sources get added, page structures change, and requirements evolve. Over time, datasets can begin to shift in ways that weren’t part of the original plan.

That’s why it’s important to revisit how data is being collected on a regular basis.

Reviewing field definitions, checking for unexpected changes, and validating outputs helps keep everything aligned. Small adjustments along the way are much easier than trying to correct a dataset after it has grown significantly. Consistency is what allows data to remain useful.
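Part of that review can be automated by comparing each record against the field definitions the pipeline is supposed to produce. The sketch below assumes a hypothetical EXPECTED_FIELDS mapping of field names to Python types; a real pipeline would likely have its own schema format.

```python
# Hypothetical field definitions for a pricing dataset.
EXPECTED_FIELDS = {"product_id": str, "price": float, "availability": str}


def check_record(record: dict, expected=EXPECTED_FIELDS) -> list:
    """Return a list of human-readable problems; an empty list means no drift."""
    problems = []
    # Fields that appeared without being part of the definition.
    for name in sorted(set(record) - set(expected)):
        problems.append(f"unexpected field: {name}")
    # Fields that are missing or have changed type.
    for name, expected_type in expected.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected_type):
            actual = type(record[name]).__name__
            problems.append(f"wrong type for {name}: {actual}")
    return problems


# Hypothetical record after a page layout change.
drifted = {"product_id": "SKU-1042", "price": "19.99", "reviewer_name": "Jane"}
for problem in check_record(drifted):
    print(problem)
```

Run against a sample of each crawl, a check like this surfaces new or changed fields while the adjustment is still small.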

Building Systems That Teams Can Rely On

When all of this comes together, the difference is noticeable.

Pipelines that are designed with clear boundaries, aligned to a specific use case, and monitored over time tend to produce data that is easier to trust. The outputs are more consistent, the insights are more reliable, and the system as a whole becomes easier to manage.

On the other hand, pipelines that prioritize volume without the same level of structure often become harder to work with as they grow. The data becomes more difficult to interpret, and small inconsistencies start to create larger issues.

Taking the time to get this right early makes everything that follows much smoother.

Working with Rayobyte

At Rayobyte, we work with teams that rely on publicly available web data to support pricing intelligence, analytics, and large-scale data workflows.

Our focus is on helping teams collect public data in a way that stays consistent and reliable as their systems scale. That includes providing proxy infrastructure that supports stable performance, accurate geolocation, and predictable behavior across different regions.

We also spend time understanding how that data is being used, which makes it easier to support pipelines that are aligned with real use cases rather than just technical requirements.

When data collection is built on a clear foundation, everything that depends on it becomes easier to manage, from analysis to decision-making.
