What Is OpenClaw? How It Works and When to Use It for Web Scraping

If you’ve been exploring web scraping tools recently, there’s a good chance you’ve come across OpenClaw.

It tends to pop up in conversations around open source scraping, especially among teams that want more control over how they collect data without relying entirely on third-party platforms. On the surface, it looks like an appealing option. It’s flexible, it’s transparent, and it gives you the ability to build exactly what you need without being locked into someone else’s system.

That combination is understandably attractive. At the same time, tools like OpenClaw are often described as if they can handle everything, from small experiments to large-scale production pipelines. The reality is more nuanced: OpenClaw can be very useful in the right context, but like most open source scraping tools, it has limits that become more visible as you start to scale.

To make sense of where it fits, it helps to look at how it actually works, what it’s good at, and where teams tend to run into challenges.

Hitting Limits with OpenClaw?

Stabilize your pipeline with rayobrowse, designed for consistent performance at scale.

What Is OpenClaw?

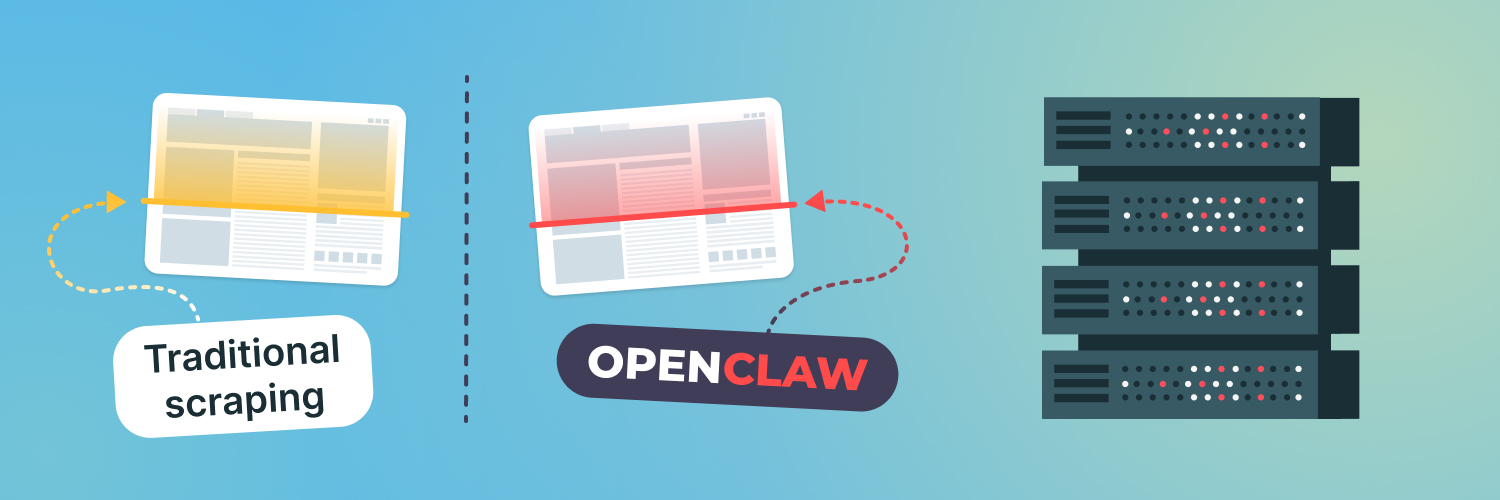

OpenClaw is an open source web scraping tool designed to give developers more control over how they collect and process data from the web.

Instead of providing a fully managed platform, it offers a framework that you can build on top of. That means you’re responsible for defining how requests are made, how data is extracted, and how results are stored. For teams that want flexibility and transparency, that’s often a big advantage.

OpenClaw fits into a broader category of open source scraping tools that prioritize customization over convenience.

Rather than abstracting everything away, it gives you access to the underlying mechanics of scraping, which makes it easier to tailor the tool to specific use cases. Whether you’re experimenting with a new data source or building a custom pipeline, that level of control can be very useful.

How OpenClaw Works in Practice

At its core, OpenClaw operates as a scraping framework rather than a plug-and-play solution.

You define the targets you want to collect data from, configure how requests should be sent, and set up extraction logic to pull out the information you need. That usually involves working directly with HTML structures, selectors, and parsing rules, depending on the type of content you’re dealing with.

From there, you can structure the output in whatever format makes sense for your application, whether that’s feeding into a database, an analytics pipeline, or a downstream system.
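To make the target, extraction, and output steps concrete, here is a minimal sketch in plain Python. It does not use OpenClaw's own API, which this article does not document; the `TitleParser` class, the `h2.title` markup, the sample HTML, and the example URL are all assumptions chosen for illustration.

```python
import json
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collects the text of every <h2 class="title"> element (hypothetical markup)."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.titles = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "h2" and ("class", "title") in attrs:
            self._in_title = True
            self.titles.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_title = False

    def handle_data(self, data):
        # Only keep text that appears inside a matching heading.
        if self._in_title:
            self.titles[-1] += data


def extract_titles(html):
    """Run the extraction logic over one page of HTML."""
    parser = TitleParser()
    parser.feed(html)
    parser.close()
    return [title.strip() for title in parser.titles]


def to_json_lines(url, titles):
    """Structure the output as one JSON object per line for downstream systems."""
    return "\n".join(json.dumps({"url": url, "title": t}) for t in titles)


# Stand-in for a fetched page; a real pipeline would download this.
SAMPLE_HTML = (
    '<div><h2 class="title">Blue Widget</h2>'
    '<h2 class="title"> Red Widget </h2><h2>Ad</h2></div>'
)

print(to_json_lines("https://example.com/products", extract_titles(SAMPLE_HTML)))
```

If the site later renames the `title` class, only `handle_starttag` needs to change, which is the kind of adjustment this style of framework leaves in your hands.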

This approach gives you a great deal of flexibility. If a website changes its structure, you can update your parsing logic. If you need to adjust how requests are handled, you can modify your configuration. For developers who are comfortable working at that level, it provides fine-grained control. That said, it also means more responsibility: every one of those adjustments is yours to notice, make, and maintain.

Where OpenClaw Works Well

OpenClaw is particularly useful in situations where you want to build something tailored.

If you’re working on a specific project with clearly defined requirements, an open source tool like OpenClaw allows you to shape the pipeline exactly the way you want it. You’re not constrained by the limitations of a managed service, and you can adapt quickly as your needs evolve.

It’s also a good fit for experimentation. When you’re testing a new idea or exploring a new data source, having a flexible tool can make it easier to iterate. You can try different approaches, adjust your logic, and refine your setup without needing to work around predefined constraints.

For teams that value transparency, OpenClaw offers a clear view of how everything works under the hood.

That can be especially helpful when debugging issues or optimizing performance, since you have direct access to the parts of the system that would otherwise be hidden.

Where OpenClaw Starts to Struggle

The challenges tend to appear when you move from controlled environments into larger, more dynamic workloads.

At small to medium scale, managing requests, parsing logic, and data flow is relatively straightforward. As the number of targets increases, or as the frequency of data collection rises, things become more complex.

One of the first areas where this shows up is reliability. Websites change frequently, and scraping pipelines need to adapt to those changes without breaking. With OpenClaw, that responsibility sits entirely with your team. Maintaining parsers, handling edge cases, and keeping everything running smoothly can become a significant ongoing effort.
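One common way to catch a silently broken parser is to validate each batch of extracted records before storing it. The sketch below is one possible approach rather than anything built into OpenClaw; the field names, the `validate_records` helper, and the 20% threshold are illustrative assumptions.

```python
def validate_records(records, required=("title", "price"), max_missing_ratio=0.2):
    """Return (ok, missing_ratio) for a batch of scraped records.

    A record counts as incomplete when any required field is absent or empty.
    An empty batch is treated as a failure, since it usually means the
    selectors no longer match the page.
    """
    if not records:
        return False, 1.0
    incomplete = sum(
        1 for record in records if any(not record.get(field) for field in required)
    )
    ratio = incomplete / len(records)
    return ratio <= max_missing_ratio, ratio


# Hypothetical batch: one of four records lost its price after a layout change.
batch = [
    {"title": "Blue Widget", "price": "9.99"},
    {"title": "Red Widget", "price": "12.50"},
    {"title": "Green Widget", "price": ""},
    {"title": "Black Widget", "price": "7.00"},
]

ok, ratio = validate_records(batch)
if not ok:
    print(f"Parser may be broken: {ratio:.0%} of records are incomplete")
```

A check like this does not repair the parser, but it turns a quiet drop in data quality into a visible signal that someone needs to look at the target site.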

Traffic management is another factor. As you scale, distributing requests in a way that remains stable and consistent becomes more important. Without the right infrastructure in place, pipelines can become less predictable, which affects both performance and data quality.
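As a small illustration of request distribution, the sketch below reorders a crawl queue so that consecutive requests rotate across hosts instead of hitting one site in a burst. The `interleave_by_host` helper and the example URLs are hypothetical, and a production setup would typically add per-host delays and retries on top of this.

```python
from collections import defaultdict, deque
from urllib.parse import urlsplit


def interleave_by_host(urls):
    """Round-robin URLs across hosts, preserving order within each host."""
    queues = defaultdict(deque)
    for url in urls:
        queues[urlsplit(url).netloc].append(url)

    ordered = []
    while queues:
        # Iterate over a snapshot so exhausted hosts can be removed safely.
        for host in list(queues):
            ordered.append(queues[host].popleft())
            if not queues[host]:
                del queues[host]
    return ordered


pending = [
    "https://a.example/1",
    "https://a.example/2",
    "https://a.example/3",
    "https://b.example/1",
]

for url in interleave_by_host(pending):
    print(url)
```

Interleaving only spreads load as far as the queue allows: once every other host is exhausted, the remaining URLs for a single host still run back to back, which is where rate limits become necessary.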

Then there’s the question of maintenance. Open source tools give you flexibility, but they also require time and resources to manage. Updates, monitoring, debugging, and scaling all need to be handled internally, which can add up quickly as systems grow.

The Gap Between Tools and Infrastructure

This is where many teams start to feel the gap between having a scraping tool and having a complete scraping system.

OpenClaw provides the tooling layer. It helps you define how to collect and process data. What it doesn’t provide is the infrastructure layer that supports large-scale, reliable data collection.

That includes things like managing request distribution, maintaining consistent geolocation, handling variability across websites, and ensuring that pipelines remain stable over time.

Without that layer, even well-designed scraping logic can struggle to perform consistently at scale. It’s not a limitation of OpenClaw specifically, but a reflection of how most open source scraping tools are designed.

What Scaling Actually Requires

Scaling a scraping pipeline isn’t just about increasing the number of requests. It’s also about maintaining consistency as the workload grows.

That means making sure that requests are distributed evenly, that data is collected under stable conditions, and that results remain reliable even as websites evolve. It also means having visibility into how the system behaves, so issues can be identified and addressed quickly.
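Visibility can start with something as simple as counting outcomes per target. The following sketch assumes a hypothetical `ScrapeStats` helper and arbitrary thresholds; it is meant to show the idea, not to stand in for a full monitoring stack.

```python
from collections import Counter


class ScrapeStats:
    """Tracks attempts and failures per target so problem sites stand out."""

    def __init__(self):
        self.attempts = Counter()
        self.failures = Counter()

    def record(self, target, ok):
        self.attempts[target] += 1
        if not ok:
            self.failures[target] += 1

    def failure_rate(self, target):
        attempts = self.attempts[target]
        return self.failures[target] / attempts if attempts else 0.0

    def unhealthy(self, threshold=0.5, min_attempts=3):
        """Targets with enough attempts and a failure rate above the threshold."""
        return sorted(
            target
            for target, count in self.attempts.items()
            if count >= min_attempts and self.failure_rate(target) > threshold
        )


stats = ScrapeStats()
for outcome in (False, False, True, False):
    stats.record("a.example", outcome)
for outcome in (True, True, True):
    stats.record("b.example", outcome)

print(stats.unhealthy())
```

The `min_attempts` guard keeps a single failed request from flagging a target, so alerts reflect a pattern and not one bad response.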

These are the kinds of challenges that don’t always show up in early-stage projects, but they become more visible once the pipeline is running continuously and supporting real-world applications. At that point, the focus shifts from building the scraper to keeping the entire system stable.

Where rayobrowse Fits In

This is where tools like rayobrowse come into the picture.

rayobrowse is designed to complement frameworks like OpenClaw by handling the infrastructure side of web data collection. Instead of replacing your scraping logic, it supports it by providing a more stable and scalable way to access web content.

That includes managing request distribution, maintaining consistent geolocation, and supporting high-volume data collection without introducing unnecessary complexity into your pipeline.

For teams using OpenClaw, this means you can continue to benefit from the flexibility of an open source tool while relying on a dedicated layer to handle the parts that are hardest to scale.

The combination allows you to keep control where it matters, while reducing the overhead that comes with managing everything yourself.

Choosing the Right Approach for Your Use Case

There isn’t a single “best” tool for web scraping.

The right choice depends on what you’re trying to achieve and how your requirements evolve over time.

If you’re working on a focused project, experimenting with new data sources, or building something that requires a high level of customization, OpenClaw can be a strong option. It gives you the flexibility to design your pipeline exactly the way you want it.

As your needs grow, the conversation often shifts. Reliability, scalability, and consistency become more important, and that’s where infrastructure starts to play a bigger role. At that stage, combining tools rather than relying on a single solution tends to produce better results.

Working with Rayobyte

At Rayobyte, we work with teams at every stage of their scraping journey, from early experimentation to large-scale production systems.

We understand the appeal of open source tools like OpenClaw, and we also know where the challenges tend to appear as those systems grow. That’s why we focus on providing the infrastructure that helps teams scale without losing control of their pipelines.

rayobrowse is built to support high-volume, reliable data collection, with consistent performance and accurate geolocation across regions. It’s designed to fit alongside existing tools rather than replace them, which makes it easier to build systems that are both flexible and stable.

If your team is using OpenClaw or exploring open source scraping tools, and you’re starting to think about how to scale more reliably, we’re always happy to help you find the right balance.
