Web Crawling vs Web Scraping: What’s the Difference?

The explosive growth of the internet in recent years has led to a rapid increase in the number of websites. Wherever there is a website, you’ll find a web page, and where there is a web page, you have data.

This relationship between websites and data is what makes both web crawlers and web scrapers useful. However, people often confuse web crawling and web scraping, since the two terms sound similar.


Although there are some similarities between web crawling and web scraping, they’re different processes. To understand the similarities and differences between the two, we must first see what each procedure entails and how a web crawler works compared to a web scraper.

In this article, we’ll explore and compare both web scraping and web crawling. By doing so, we hope to clarify any confusion you may have! So stick with us as we take a deep dive into the world of web crawling and web scraping.


What Is Web Crawling?

Now, you may be wondering, what is a web crawler? Web crawling is the use of a web crawler to browse and parse web pages across the internet. A web crawler, also known as a web spider or spider bot, is an internet bot that systematically visits web pages and indexes them. For example, Moz explains how search engines use crawlers to discover and log a site’s pages so the search engine can surface them when you search.

Web crawling is similar to sorting, cataloging, or indexing items in the real world.

For example, imagine a librarian or archive manager who goes through many books or records. Each book or record that enters the collection is initially unsorted. The librarian then examines it, for instance by checking its category and content information. Ultimately, the item is sorted, or “indexed,” and becomes a known part of the collection.

This whole process makes it easier for people to find what they’re looking for. The same applies to the internet (the library in this analogy) and web pages (the books).

The internet is far too vast to crawl manually. Fortunately, web crawlers are built for exactly this purpose; a single crawler can vastly outperform a human, crawling multiple URLs per second.

Besides indexing web pages, a web crawler can also validate a site’s hyperlinks. Validating hyperlinks means requesting each link on the site and checking that it returns a valid response.

If the crawler finds a broken link, it can alert the webmaster to fix it. A crawler can also validate HTML elements this way, checking for malformed or unclosed tags.
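To make the link-validation idea concrete, here is a minimal C# sketch, assuming the crawler has already collected a page’s links as absolute URLs. The names LinkChecker, GetStatusAsync, and FindBrokenLinksAsync are hypothetical. The sketch treats any 4xx/5xx status, or a request that fails outright, as broken. Sending HEAD requests to avoid downloading page bodies is an assumed choice; some servers reject HEAD, so a real checker may need to fall back to GET.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Net.Http;
using System.Threading.Tasks;

public static class LinkChecker
{
    // Returns the HTTP status code for a URL, or 0 if the request fails outright
    // (DNS failure, refused connection, timeout). HEAD avoids downloading bodies.
    public static async Task<int> GetStatusAsync(HttpClient client, string url)
    {
        try
        {
            using var request = new HttpRequestMessage(HttpMethod.Head, url);
            using var response = await client.SendAsync(request);
            return (int)response.StatusCode;
        }
        catch (HttpRequestException)
        {
            return 0;
        }
        catch (TaskCanceledException)
        {
            return 0; // HttpClient reports timeouts as a canceled task
        }
    }

    // Checks each distinct link once and returns those that look broken:
    // unreachable (status 0) or any 4xx/5xx response, in first-seen order.
    public static async Task<List<string>> FindBrokenLinksAsync(
        IEnumerable<string> links, Func<string, Task<int>> getStatus)
    {
        var broken = new List<string>();
        foreach (string link in links.Distinct())
        {
            int status = await getStatus(link);
            if (status == 0 || status >= 400)
                broken.Add(link);
        }
        return broken;
    }
}
```

In a real crawler you would pass url => LinkChecker.GetStatusAsync(client, url) as the status function; taking it as a delegate keeps the broken-link rule separate from the network code.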

What Is Web Scraping?

Exploring the internet and cataloging web pages is one thing, but what if the web page contains some necessary data you wish to extract?

Also known as data scraping, web scraping is used for this exact scenario. Web scraping is the process of extracting data from websites and storing it in a specified file format. The data is usually publicly available and can be stored in multiple file formats.

Some common data extraction formats for web scraping include:

- CSV files

- Excel spreadsheets

- XML files

- SQL scripts

No matter what format the scraper chooses, the goal is to make the data easy to organize and read later on. Since web scraping extracts data at a large scale, it’s essential to store the data in an easily organizable and accessible manner.

You could also web scrape manually. For example, imagine a person copying and pasting some information from a website. This practice is rudimentary web scraping.


The problem arises, again, with the size of the web. In some cases, more extensive data scraping operations can require parsing through hundreds or thousands of web pages and scraping large volumes of data. Again, we don’t need to explain why this process would be extremely inefficient if a human did it.

Fortunately, a human doesn’t have to scrape web pages manually. We have dedicated web scraper bots for that.

Much like the web crawler, a web scraper — also known as a data scraper — is optimized for scraping large volumes of data at a much faster pace than humans can hope to do on their own.

Web Crawling Vs. Web Scraping: The Differences

Now that we have a basic idea of what web crawling and web scraping are, it’s time to take a closer look at some of the underlying differences between the two.

The short version is that web crawling and web scraping are two different processes with different goals.

For a web crawler, the goal is to parse through as many web pages as possible to index them. Therefore, a web crawler has to go through every page and link on a website to index it properly.

Think about it: When you’re making a map of an area, you need to consider the entire region you’re mapping. Otherwise, your map will be incomplete.

It’s the same with web crawlers. A site can have multiple pages, each of which the web crawler must individually catalog and index. If a crawler skips a few pages, it won’t have complete data, which would defeat the purpose of crawling it.

On the other hand, a web scraper doesn’t need to parse through every page on a site. It only needs to visit the pages that contain the data it wants and extract that data. Web scrapers also have a targeted goal. A web scraper for marketing data, for example, might look only at a competitor’s website and collect data from there.

Web scrapers are customized to extract more specific information from a website than web crawlers do. The programmer who codes the web scraper configures it to target only specific identifiers, such as particular HTML elements, classes, or attributes.

Web crawler use cases

Although indexing is the main goal of web crawling, web crawlers have several other use cases as well.

Let’s have a closer look at some web crawler use cases to help us continue to better understand web crawling vs. web scraping.

1. Web page indexing

The most common use for a web crawler is web page indexing. Search engines often use web crawlers for this purpose. As we mentioned before, the goal is to map out as many web pages on the internet as possible.

In the past, search engines indexed web pages through “back-of-the-book indexing,” where they arranged web pages alphabetically or using traditional sorting schemes. This process was slow and produced unreliable search results.

It wasn’t until search engines started using meta-data tags that search results became more accurate. These tags included information such as keywords, key phrases, or short descriptions of a site’s content.

2. Automated website maintenance

Another use for web crawlers is to maintain websites. Rather than manually checking for errors or bugs, a web admin can set up a web crawler to automate the site’s maintenance.

One task where web crawlers are helpful is identifying hyperlink navigation errors. For example, if a link on the website leads to a page users cannot access, the crawler can flag that link on its next pass and alert the webmaster. The webmaster can then fix the link so it works for every visitor.

A web crawler that automatically checks for navigation errors is especially useful for businesses that need their website to be up and running at all times, such as banks.

3. Host and service freshness check

Nearly every web page on the internet depends on external hosts and services to run. Unfortunately, the providers behind these hosts and services sometimes experience downtime.

Web crawlers are a useful tool for checking freshness, a metric that indicates whether each host and service is up and responding. Freshness is judged against a freshness threshold.

A web admin can configure a web crawler to periodically ping each host or service that a website or web page depends on and compare the results against the freshness threshold. The results ultimately determine which hosts and services are “fresh” and which are stale.
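A minimal C# sketch of the comparison step, assuming the crawler records when each host last responded successfully to a ping. The HostCheck record, the FreshnessChecker class, and the idea of a single fixed threshold are hypothetical choices for illustration; a real setup might use a different threshold per host or service.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical record: a host name and when it last answered a ping successfully.
public record HostCheck(string Host, DateTimeOffset LastSuccess);

public static class FreshnessChecker
{
    // A host counts as "fresh" if its last successful response is no older than the threshold.
    public static bool IsFresh(HostCheck check, DateTimeOffset now, TimeSpan threshold) =>
        now - check.LastSuccess <= threshold;

    // Splits the checked hosts into fresh and stale groups, preserving input order.
    public static (List<string> Fresh, List<string> Stale) Classify(
        IEnumerable<HostCheck> checks, DateTimeOffset now, TimeSpan threshold)
    {
        var fresh = new List<string>();
        var stale = new List<string>();
        foreach (HostCheck check in checks)
        {
            if (IsFresh(check, now, threshold))
                fresh.Add(check.Host);
            else
                stale.Add(check.Host);
        }
        return (fresh, stale);
    }
}
```

Passing the current time in, rather than reading the clock inside the method, keeps the rule easy to test and lets the crawler evaluate every host against the same instant.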

4. Web scraping

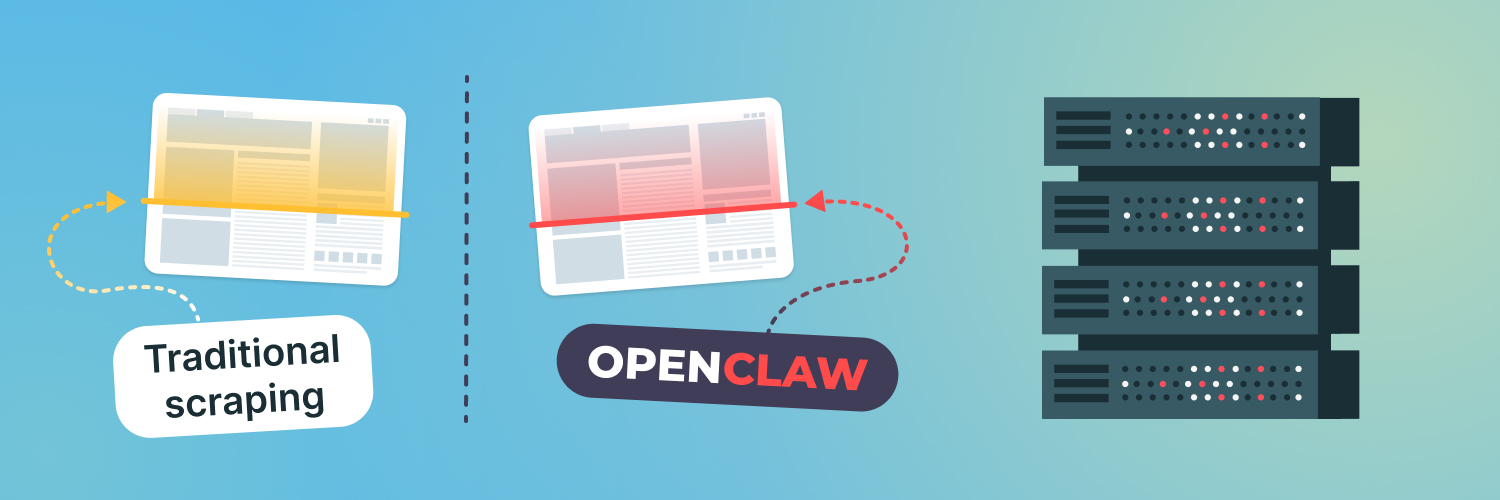

Finally, web scraping itself can be considered a use case of web crawling. This use case highlights an essential aspect of web crawling vs. web scraping: Nearly all web scrapers use web crawlers, but web crawlers don’t need to use web scrapers.

How do web scrapers use crawlers, you may ask? Many web scraper bots have a built-in module that first crawls the web pages they need to scrape data from. Without a crawler to find those pages first, a web scraper would have a hard time locating the relevant web pages.

Web scraping use cases

A big difference when considering web crawling vs. web scraping is that web scraping has a wider range of use cases than web crawling. Anywhere there is data you can extract, chances are you can use a web scraper.

1. Monitoring market competition

One of the most important tasks for a business owner is keeping track of the competition. Without knowing its competitors’ prices for goods and services, a business will struggle to stay ahead.

Understandably, this is difficult to do manually. Business owners would need to visit each competitor’s website, look up prices by hand, and record them in a data file.

A web scraper can automate this process, saving a great deal of time and resources. Besides pulling market prices, a web scraper can also download product descriptions, reviews, and ratings. This data can make or break a business in a cutthroat market, so it shouldn’t be a surprise that web scrapers are often used to monitor market competition.

2. Artificial intelligence (AI)

A web scraper is a bot, but it’s nowhere near the level of sophistication and complexity of most AI. That said, a data scraper is often a key component of getting large amounts of data to train AI.

Today, machine learning, one of the leading AI techniques, relies heavily on large datasets to train models and make them more accurate. Unfortunately, suitable datasets can be scarce, so programmers and computer scientists often use data scrapers to help collect training data.

So, when you use a data scraper to train AI, you’re using a comparatively simple bot to help build a far more sophisticated system.

3. Cryptocurrency

The crypto market is one of the most important financial markets in the world today, and it is constantly changing. Crypto prices move around the clock as coins trade, and new altcoins, tokens, and market trends emerge regularly, so crypto sites are constantly updating.

A web scraper can be a key asset for keeping up with market prices and trends. Dynamic data, such as coin prices, is straightforward to refresh regularly with a web scraper. At the same time, crypto news, such as the launch of a new altcoin or the rise of a token, is easy to monitor with a web scraper.

4. Lead generation

Finally, we have one of the classic applications of web scraping: lead generation.

Whenever a business launches a new product or service, it wants to sell as much as it can in the first few months. Doing so without market leads to support the launch is difficult, and generating reliable leads is hard without customer data.

A web scraper can make the lead generation process much simpler by scraping web pages for publicly available customer data. This data may include the customer’s name, contact details, and profile information.

Types of web crawlers

As we mentioned, different types of web crawlers can be used for different use cases. To better understand web crawling vs. web scraping, let’s look at some different types of web crawlers and how they work.

1. General-purpose web crawler

The general-purpose web crawler is the most commonly used type of web crawler.

The first web crawlers in existence were all general-purpose web crawlers. In the early days of the internet, the goal was for spider bots to index as much of the web as possible, so the first generation of crawlers operated without hard and fast rules or restrictions.

Despite being suited for a range of purposes, general-purpose web crawlers require extensive resources to run, including but not limited to vast system memory and a fast internet connection.

2. Focused web crawler

After programmers built general-purpose web crawlers, they realized they needed web crawlers that were better optimized for specific tasks. This period is when focused web crawlers first came into the picture.

The focused web crawler, by design, narrows its search to specific topics. Meta tags are therefore often the key signals that tell these crawlers whether a page is relevant. As an example of a focused web crawler, imagine a spider bot that only indexes car maintenance websites.

Since their scope is much narrower, focused web crawlers are less resource-intensive than most general-purpose web crawlers, using only a fraction of the system memory and internet bandwidth. Although specific businesses often use focused web crawlers, big-name search engines can also use focused web crawlers.
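As a rough sketch of how meta tags can steer a focused crawler, the C# code below keeps a page only if its keywords meta tag mentions one of the crawler’s topic terms. The FocusedFilter name and the regex are assumptions for illustration; the pattern only handles tags where name comes before content, and a production crawler would use a real HTML parser and richer relevance scoring.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.RegularExpressions;

public static class FocusedFilter
{
    // Simplified pattern: matches <meta name="keywords" content="..."> with
    // name before content. Not a general HTML parser.
    private static readonly Regex MetaKeywords = new Regex(
        @"<meta\s+name\s*=\s*[""']keywords[""']\s+content\s*=\s*[""']([^""']*)[""']",
        RegexOptions.IgnoreCase);

    // Returns the comma-separated keywords as a case-insensitive set.
    public static HashSet<string> ExtractKeywords(string html)
    {
        var keywords = new HashSet<string>(StringComparer.OrdinalIgnoreCase);
        Match match = MetaKeywords.Match(html);
        if (!match.Success)
            return keywords;

        foreach (string keyword in match.Groups[1].Value.Split(',').Select(k => k.Trim()))
        {
            if (keyword.Length > 0)
                keywords.Add(keyword);
        }
        return keywords;
    }

    // Keep the page only if at least one meta keyword matches a topic term.
    public static bool IsOnTopic(string html, IEnumerable<string> topicTerms) =>
        ExtractKeywords(html).Overlaps(topicTerms);
}
```

Pages that fail the check would simply not have their links added to the crawl queue, which is how a focused crawler saves memory and bandwidth.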

3. Parallel web crawler

What gives faster results than running a web crawler? Running two web crawlers! However, that may be very resource-intensive, given how much computing power just a single web crawler may require.

The alternative is a parallel web crawler: a single crawler that runs multiple crawling processes in parallel. This approach has the advantage of minimizing overhead while maximizing the data download rate.

You may wonder what happens if one crawling process discovers and downloads the same web page as another. Parallel crawler policy mechanisms, such as rules for assigning URLs to processes, keep this from happening. Different parallel processing architectures are based on different policy mechanisms. The most popular parallel web crawler architectures are the distributed crawler and the intra-site parallel crawler.
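The sketch below illustrates two common ways to keep parallel crawling processes from overlapping, assuming all processes run inside one .NET application. TryClaim uses a shared ConcurrentDictionary so that only one process can claim a given URL; AssignProcess is a static alternative that hashes each URL’s host to a process number, so every page on a site goes to the same process. The class name, the normalization rule, and the choice of FNV-1a hashing are illustrative assumptions, not any specific crawler’s policy.

```csharp
using System;
using System.Collections.Concurrent;

public static class ParallelCrawlPolicy
{
    // Shared "already claimed" set: TryAdd succeeds for exactly one caller per key,
    // so two crawling processes never both download the same URL.
    private static readonly ConcurrentDictionary<string, byte> Claimed = new();

    public static bool TryClaim(string url) => Claimed.TryAdd(Normalize(url), 0);

    // Static alternative: every URL on a host maps to the same process number.
    // FNV-1a gives a hash that is stable across runs, unlike string.GetHashCode,
    // which .NET randomizes per process.
    public static int AssignProcess(string url, int processCount)
    {
        string host = new Uri(url).Host.ToLowerInvariant();
        uint hash = 2166136261;          // FNV-1a offset basis
        foreach (char c in host)
        {
            hash ^= c;
            hash *= 16777619;            // FNV-1a prime (wraps around on overflow)
        }
        return (int)(hash % (uint)processCount);
    }

    // Hypothetical minimal normalization: System.Uri already lowercases the scheme
    // and host, and GetLeftPart(UriPartial.Query) drops any #fragment.
    private static string Normalize(string url) =>
        new Uri(url).GetLeftPart(UriPartial.Query);
}
```

A distributed crawler spread across several machines cannot share an in-memory dictionary, which is one reason host-based assignment is attractive in that architecture.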

4. Incremental web crawler

So you’ve already crawled a website and indexed all its elements. However, there’s now a problem: The website either updated the web page or removed it entirely. How do you account for this difference?

Fortunately, this problem has been around since the early days of web crawling and already has a solution: the incremental web crawler. Instead of searching for new pages to index, incremental web crawlers track changes across previously indexed web pages.

They do this by periodically visiting indexed sites to check for changes and updating their index accordingly.

Incremental web crawlers are helpful in tracking web content that frequently changes, like news websites, blogs, or price indices.
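One simple way to detect changes, sketched below under the assumption that comparing raw page content is good enough, is to store a hash of each page and compare it on the next visit. IncrementalIndex and PageChange are hypothetical names, and SHA256.HashData and Convert.ToHexString require .NET 5 or later. Real incremental crawlers often also use HTTP validators such as ETag or Last-Modified headers and ignore volatile parts of a page, like timestamps or ads.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Security.Cryptography;
using System.Text;

public enum PageChange { New, Unchanged, Modified, Removed }

public class IncrementalIndex
{
    // URL -> SHA-256 hash (hex) of the content seen on the previous visit.
    private readonly Dictionary<string, string> _hashes = new();

    // content == null models a page that no longer exists (for example, a 404).
    public PageChange Revisit(string url, string? content)
    {
        if (content is null)
        {
            _hashes.Remove(url);        // forget it; a later reappearance counts as New
            return PageChange.Removed;
        }

        string hash = Convert.ToHexString(SHA256.HashData(Encoding.UTF8.GetBytes(content)));
        if (!_hashes.TryGetValue(url, out string? previous))
        {
            _hashes[url] = hash;        // first time this URL has been indexed
            return PageChange.New;
        }
        if (previous == hash)
            return PageChange.Unchanged;

        _hashes[url] = hash;            // content differs: update the index entry
        return PageChange.Modified;
    }
}
```

Storing only a fixed-size hash per URL keeps the index small, which matters when a crawler revisits millions of pages.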

5. Deep web crawler

The last major type of web crawler is the deep web crawler, also known as the hidden web crawler.

Typically, a web crawler can only access the surface web: the part of the internet we can normally see and interact with. The surface web is reachable through hyperlinks, which is what allows search engines to index its pages.

There is also a deeper part of the web that is not normally accessible, known as the deep web. Since you can’t reach deep web pages through hyperlinks, a deep web crawler must use other methods, such as submitting specific keywords through a site’s search forms or registering with a site to obtain special user access.

Types of web scrapers

When it comes to web crawling vs. web scraping, there are more types of web crawlers than there are web scrapers. Most web scrapers more or less follow a similar architecture. However, they may differ depending on the data scraping technique in use.

1. Manual scraper

By “manual data scraper,” yes, we do mean a person copying and pasting data from web pages to a data storage file. Although not even a bot, a manual data scraper still technically counts as a web scraper.

Scraping manually does have some advantages over using an automated bot: most anti-scraping measures don’t work on humans. That said, the disadvantages of manual scraping far outweigh these benefits. It’s a very time-consuming process, and even a slow web scraper is far faster than a human.

2. HTML parser

The most basic type of web scraper is perhaps the HTML parser, which targets basic HTML web elements to look for data structures to scrape.

HTML parsers can target both static and dynamic web pages, and they work for both linear and nested HTML pages. This kind of parser is helpful for various purposes, such as extracting text, hyperlinks, and email addresses, or even screen scraping.
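For a basic illustration of an HTML parser, the C# sketch below pulls hyperlinks and email addresses out of raw HTML with regular expressions. The SimpleHtmlExtractor class and both patterns are simplified assumptions: they work on straightforward markup like the usage example, but regexes cannot cope with every HTML edge case, so a real scraper would normally use a dedicated HTML parsing library.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Text.RegularExpressions;

public static class SimpleHtmlExtractor
{
    // href value of any <a ...> tag, in single or double quotes (simplified).
    private static readonly Regex Href = new Regex(
        @"<a\s[^>]*?href\s*=\s*[""']([^""']+)[""']", RegexOptions.IgnoreCase);

    // A loose email pattern; real-world address validation is more involved.
    private static readonly Regex Email = new Regex(
        @"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}");

    // Distinct link targets, in the order they first appear.
    public static List<string> ExtractLinks(string html) =>
        Href.Matches(html).Select(m => m.Groups[1].Value).Distinct().ToList();

    // Distinct email addresses found anywhere in the markup.
    public static List<string> ExtractEmails(string html) =>
        Email.Matches(html).Select(m => m.Value).Distinct().ToList();
}
```

For example, given a snippet containing a mailto link and a relative link, ExtractLinks returns both href values, while ExtractEmails returns the address once even if it appears in both the href and the link text.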

3. DOM parser

The Document Object Model, or DOM, is a standard, originally published by the W3C (World Wide Web Consortium), that defines how HTML and XML documents are represented as objects that programs can access and modify. The DOM is cross-platform and language-independent; you can access it regardless of the programming language you use. It organizes the document as a logical tree of nodes.

A DOM parser scrapes data by taking advantage of this tree structure. Each node in the DOM is an object representing part of the document, such as an element, an attribute, or a run of text.

Web scrapers that use the DOM parser can easily use tools like XPath to scrape web data. In addition, most modern browsers support the DOM. The headless versions of these browsers are, therefore, a good choice for implementing a DOM parser.
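Here is a small C# sketch of DOM-style extraction with XPath, using the built-in System.Xml.Linq and System.Xml.XPath namespaces. It assumes well-formed, XHTML-style markup and a hypothetical page layout in which each product sits in a div with class product. Ordinary HTML is often not well-formed, so in practice you would load it with an HTML-aware parser (such as HtmlAgilityPack) or a headless browser and then run similar XPath queries.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;
using System.Xml.XPath;

public static class XPathScraper
{
    // Parses well-formed markup into a node tree, then selects each product
    // container and reads its name and price with relative XPath queries.
    public static List<(string Name, string Price)> ExtractProducts(string xhtml)
    {
        XDocument doc = XDocument.Parse(xhtml);
        return doc.XPathSelectElements("//div[@class='product']")
            .Select(product => (
                Name: product.XPathSelectElement("./h2")?.Value.Trim() ?? "",
                Price: product.XPathSelectElement("./span[@class='price']")?.Value.Trim() ?? ""))
            .ToList();
    }
}
```

Selecting the container first and then querying relative to it keeps each name paired with its own price, even if some products are missing a field.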

4. Vertical aggregators

Vertical aggregators use a slightly different data-harvesting technique. Some companies have developed dedicated harvesting platforms that focus on specific verticals, meaning industries or content categories, and create multiple bots for each vertical. The goal is to keep humans out of the loop entirely.

As such, some data scrapers may use the vertical aggregator structure to their advantage. Ones that do are usually more robust, as they are scalable and can retrieve multiple fields of information instead of a single value. They can also be an advantage for the long tail of websites, which would otherwise require heavy resources to scrape data from.

Several other types of web scrapers also exist, each using different tools or technology, although most still share the same basic architecture.


How web crawlers work

By now, you should be well-versed in the whole web crawling vs. web scraping distinction. One important piece of the puzzle that’s missing from our story, however, is the basic idea behind how web crawlers work. Here, we’ll provide a high-level overview of how a typical spider bot operates.

First, it’s important to understand the structure of the web and how it’s laid out. Every website on the internet is basically a collection of web pages with a specific domain name hosted on a web server.

Each web page is built from HTML, along with related resources. These may include HTML tags, CSS styles, JavaScript code, and the web page’s meta-data, such as keywords or descriptive phrases.

Search engines that deploy web crawlers are well aware of this structure and program the bot accordingly. They will configure a web crawler to focus on particular attributes from the meta-data. The crawler will only focus on the specified attributes and selectively crawl web pages accordingly.

At the heart of any web crawler is a URL seed. The seed serves as the crawler’s starting point: the pages it leads to contain links that supply new URLs for the crawler to parse. Using a seed is necessary; the web is too large to explore without some kind of starting point.

The seed, in this case, is a list of known starting URLs. Since each page can link to other pages, the crawler follows those hyperlinks, then the hyperlinks found on the pages they lead to, and so on until it reaches a stopping condition, such as a page or depth limit.

The crawler effectively needs two lists to keep track of its progress: a list of URLs it has already visited and a list of URLs it plans to visit. Because the to-visit list must be processed in order, crawlers typically store it in a queue, often called the frontier.

The visited list, by contrast, is usually kept in a set (such as a hash set), because the crawler mostly needs to check quickly whether it has already seen a URL. The exact data structure implementation may vary depending on the type of web crawler you’re using.
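The frontier-and-visited bookkeeping can be sketched as a breadth-first crawl in C#. To keep the example self-contained, the getLinks delegate is a hypothetical stand-in for downloading a page and extracting its links, and maxPages is an assumed stopping rule. The seen set records every URL ever queued, not just those already visited, so the same URL is never added to the frontier twice.

```csharp
using System;
using System.Collections.Generic;

public static class BreadthFirstCrawler
{
    // getLinks: hypothetical hook for "fetch this page and return its hyperlinks".
    // Returns the URLs in the order they were visited.
    public static List<string> Crawl(
        IEnumerable<string> seeds, Func<string, IEnumerable<string>> getLinks, int maxPages)
    {
        var frontier = new Queue<string>();   // URLs still to visit (first in, first out)
        var seen = new HashSet<string>();     // every URL ever queued, for O(1) duplicate checks
        var visitOrder = new List<string>();  // URLs visited so far, in order

        foreach (string seed in seeds)
        {
            if (seen.Add(seed))               // skip duplicate seeds
                frontier.Enqueue(seed);
        }

        while (frontier.Count > 0 && visitOrder.Count < maxPages)
        {
            string url = frontier.Dequeue();
            visitOrder.Add(url);              // "index" the page
            foreach (string link in getLinks(url))
            {
                if (seen.Add(link))           // Add returns false if already seen
                    frontier.Enqueue(link);
            }
        }
        return visitOrder;
    }
}
```

With a real crawler, getLinks would fetch the page (for example with HttpClient) and parse out absolute URLs. Replacing the Queue with a priority queue turns this plain breadth-first order into a policy-driven one.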

Other than this basic structure, there are also policies in place that determine which web pages the crawler has to visit and their order of visiting. Policy control mechanisms are an important part of every web crawler.

Not only do policies prevent excessive load on a site’s servers by limiting the number of requests per second, but they also stop your crawler from endlessly crawling through web page after web page.
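A minimal sketch of a per-host rate limit, assuming a single-threaded crawler: before each request, the crawler asks the policy how long to wait so that no host receives more than a chosen number of requests per second. The PolitenessPolicy class and its parameter are hypothetical, and the current time is passed in so the logic can be tested without sleeping.

```csharp
using System;
using System.Collections.Generic;

public class PolitenessPolicy
{
    private readonly TimeSpan _minDelay;  // minimum gap between requests to one host
    private readonly Dictionary<string, DateTimeOffset> _lastRequest = new();  // not thread-safe

    public PolitenessPolicy(double maxRequestsPerSecond)
    {
        if (maxRequestsPerSecond <= 0)
            throw new ArgumentOutOfRangeException(nameof(maxRequestsPerSecond));
        _minDelay = TimeSpan.FromSeconds(1.0 / maxRequestsPerSecond);
    }

    // Returns how long to wait before requesting this URL, and records the
    // request as scheduled at now + wait so the next call accounts for it.
    public TimeSpan Reserve(Uri url, DateTimeOffset now)
    {
        string host = url.Host;
        TimeSpan wait = TimeSpan.Zero;
        if (_lastRequest.TryGetValue(host, out DateTimeOffset last) && now - last < _minDelay)
            wait = _minDelay - (now - last);
        _lastRequest[host] = now + wait;
        return wait;
    }
}
```

In a crawl loop, you would pass the value returned by Reserve to Task.Delay before sending each request. Limits are tracked per host, so a slow site does not hold up requests to other sites.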

Policy implementations vary depending on the type of web crawler and the crawling application. For example, a crawler may prioritize web pages with a high visitor count, on the reasoning that a popular page is likely to have outbound links to other pages the crawler needs to index.

Similarly, a crawler can use a web page’s in-degree centrality as a rule, prioritizing web pages that are linked to by many other web pages. In graph theory, a node’s in-degree is the number of edges pointing to it. Here, the nodes are web pages and the edges are hyperlinks.

The idea is that, if many pages point to a single web page, the page they all point to will be significant. This idea is similar to how a publication with a high citation count will often be important in that field.
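To show how in-degree can drive crawl order, here is a C# sketch that counts, for each page, how many distinct pages link to it, then sorts the frontier so the most-linked-to pages come first. The class and method names are hypothetical, and real search engines combine many more signals than this single count.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class InDegreePriority
{
    // links: (From, To) pairs discovered so far. A page's in-degree is the number
    // of distinct pages linking to it; repeated edges and self-links are ignored.
    public static Dictionary<string, int> InDegrees(IEnumerable<(string From, string To)> links)
    {
        var degrees = new Dictionary<string, int>();
        foreach (var (from, to) in links.Distinct())
        {
            if (from == to)
                continue;
            degrees[to] = degrees.GetValueOrDefault(to) + 1;
        }
        return degrees;
    }

    // Orders the frontier by descending in-degree; ties break alphabetically
    // so the order is deterministic.
    public static List<string> Prioritize(IEnumerable<string> frontier, Dictionary<string, int> inDegrees) =>
        frontier.OrderByDescending(url => inDegrees.GetValueOrDefault(url))
                .ThenBy(url => url, StringComparer.Ordinal)
                .ToList();
}
```

Because new links keep arriving during a crawl, a real crawler would update these counts incrementally and re-prioritize periodically rather than recomputing from scratch.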

The exact policy implementation varies across search engines, as each has its own set of rules and crawling goals in place. Usually, proprietary algorithms specific to a particular search engine are in place to determine these policies. The policies are also helpful for checking web page updates, so a crawler can know when to visit a web page next.

How web scrapers work

When looking at web crawlers vs. web scrapers, the other part of the puzzle is knowing how web scrapers work. Most web scrapers have a crawling module or API built into them, so you’re already familiar with a key component of how scrapers operate.

Most web scrapers consist of three major code components:

- A web crawling component for sending a request to a target website.

- A function for parsing and extracting that data.

- A function for saving and exporting the data.

The web crawling component doesn’t have to be a full-blown web crawler; as long as it can provide the spider bot functionality, it should suffice for your data scraper.

Consider, for example, part of a web crawling function that we have coded for our C# web scraper:

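```csharp
using System.Net.Http;
using System.Threading.Tasks;

public class WebScraper
{
    // Reuse a single HttpClient; creating one per request can exhaust sockets.
    private static readonly HttpClient client = new HttpClient();

    public async Task GetWebPageData()
    {
        // The target URL plays a role similar to a web crawler's seed.
        string webPageURL = "https://rayobyte.com/";

        // Send a GET request and wait for the response body as a string.
        string response = await client.GetStringAsync(webPageURL);

        // Hand the raw HTML to the parsing step.
        ParseHtml(response);
    }

    // Placeholder only: the real ParseHtml() is in the full C# scraper article.
    private void ParseHtml(string html)
    {
    }
}
```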

We modified the code for this example, but you can still see the basic logic in action. First, the async method declares a string variable that stores the target URL, similar to a web crawler’s seed.

The response variable then awaits HttpClient’s GetStringAsync method, which sends a GET request to webPageURL and returns the response body as a string. You can see the full code and how the ParseHtml() function works in the full article linked above, but for now, this snippet shows how the request step fits into our web scraper.

Once the scraper has parsed the data, it stores it in a data structure, such as an array. The contents of that data structure are then written to a data storage file, such as a CSV (comma-separated values) file. Different web scrapers can store the data in different file formats.
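For the export step, here is a minimal C# sketch of writing scraped rows to a CSV file. The CsvExporter class is hypothetical. It quotes any field containing a comma, double quote, or line break and doubles embedded quotes, following the conventions of RFC 4180, and it writes UTF-8 without a byte-order mark, which is an assumed choice.

```csharp
using System.Collections.Generic;
using System.IO;
using System.Linq;
using System.Text;

public static class CsvExporter
{
    private static readonly char[] SpecialChars = { ',', '"', '\n', '\r' };

    // Wraps a field in quotes (doubling any embedded quotes) only when needed.
    public static string Escape(string field) =>
        field.IndexOfAny(SpecialChars) >= 0
            ? "\"" + field.Replace("\"", "\"\"") + "\""
            : field;

    // Builds the whole CSV text: a header line, then one line per row, CRLF-terminated.
    public static string ToCsv(IEnumerable<string> header, IEnumerable<string[]> rows)
    {
        var sb = new StringBuilder();
        sb.Append(string.Join(",", header.Select(Escape))).Append("\r\n");
        foreach (string[] row in rows)
            sb.Append(string.Join(",", row.Select(Escape))).Append("\r\n");
        return sb.ToString();
    }

    // Writes the CSV to disk as UTF-8 without a byte-order mark.
    public static void Save(string path, IEnumerable<string> header, IEnumerable<string[]> rows) =>
        File.WriteAllText(path, ToCsv(header, rows), new UTF8Encoding(false));
}
```

Escaping matters because scraped text, such as product names or reviews, often contains commas or quotes that would otherwise shift values into the wrong columns.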

There may be smaller steps involved alongside the three main steps that we highlighted above. Ultimately, the end result is always a data storage file containing the scraped data from the target websites.

Web Crawling Vs. Web Scraping: Advantages and Disadvantages

One last thing to consider while looking at web crawling vs. web scraping is the set of advantages and disadvantages each process has to offer. These also include some common challenges associated with both practices. Here is our breakdown of the web crawling vs. web scraping advantages and disadvantages:

Web crawling

Pros:

- Efficient web indexing method

- Good tool for automatic site maintenance

- Does not download datasets, so limited data privacy concerns

- No need for large storage space, as there’s no data scraping

Cons:

- Limited use cases

- Can cause excessive server bandwidth consumption from crawling each site page

- May lead to IP bans and blocklists through anti-scraping measures

- Special exceptions are needed for non-uniform web structures

- Needs specific context for indexing; meta-data mislabelling can negatively affect results

Web scraping

Pros:

- Multiple use cases; wherever there is data or datasets, you can potentially scrape them

- Fast method of downloading datasets, especially with automated web scrapers

- Does not need meta-data context, because it’s implied within the code

- Does not need to parse through each and every site page, only the ones with relevant data

Cons:

- Negatively viewed by some web admins, who see data scraping as unethical

- Can cause excessive server bandwidth consumption if the bot doesn’t limit site requests

- May lead to IP bans and blocklists through anti-scraping measures

- Highly susceptible to data spoofing; fake or tampered data meant to mislead scrapers can negatively affect results

- Programmers need to implement good crawling patterns to mimic human behavior, otherwise sites can detect the bot

How To Improve Your Web Crawler and Web Scraper

Looking at the various advantages and disadvantages of web crawling vs. web scraping, you might be wondering if there is a way you can improve your web crawling, web scraping, or both. Since both web crawling and web scraping share the same disadvantages, such as IP bans and blocklists, a solution that helps one could also help the other.

Fortunately, there is a way you can lessen the impact of most of these disadvantages: using web proxies. Web proxies can constantly change your IP address, so you don’t trigger the usual scraping traps that often lead to IP bans and blocklists, like honeypots.

The problem with typical proxies is that you often have to change the IP address manually, so staying on one address for too long can still result in IP bans.
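To illustrate what rotating your own proxy list involves, here is a C# sketch of round-robin rotation. The ProxyRotator class is hypothetical, and any proxy URIs you pass to it are placeholders. Because an HttpClientHandler’s settings cannot be changed once its client has started sending requests, the sketch creates one HttpClient per proxy and hands them out in turn.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Net;
using System.Net.Http;
using System.Threading;

public sealed class ProxyRotator : IDisposable
{
    private readonly HttpClient[] _clients;
    private int _next = -1;

    // One HttpClient per proxy, each routed through its own WebProxy.
    public ProxyRotator(IEnumerable<Uri> proxies)
    {
        _clients = proxies
            .Select(p => new HttpClient(new HttpClientHandler { Proxy = new WebProxy(p), UseProxy = true }))
            .ToArray();
        if (_clients.Length == 0)
            throw new ArgumentException("At least one proxy is required.", nameof(proxies));
    }

    public int Count => _clients.Length;

    // Thread-safe round robin: each call returns the next client in order.
    public HttpClient Next()
    {
        int i = Interlocked.Increment(ref _next);
        return _clients[(int)((uint)i % (uint)_clients.Length)];
    }

    public void Dispose()
    {
        foreach (HttpClient client in _clients)
            client.Dispose();
    }
}
```

This spreads requests across addresses, but you still have to source, monitor, and replace the proxies yourself, which is the manual overhead described above.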

Fortunately, there is a better way to implement proxies in your web scraping: Rayobyte rotating residential IPs.

Rayobyte rotating residential IPs are your one-stop solution for all your proxy needs. Rather than having to manually swap your IP address to prevent detection from anti-scraping logic, Rayobyte rotating residential IPs automatically handle IP address rotation. The solution also comes with an easy-to-use dashboard that can help you manage all your proxies.

Additionally, Rayobyte proxies come pre-built with an API for proxy management. You can easily configure this API with any web crawler or web scraper bot, rather than going the old-fashioned route and hard-coding the proxies in your application.

Depending on your use case, Rayobyte data center proxies might be a better option for your enterprise needs.

The Rayobyte data center provides users with over 25 petabytes of data-handling per month. This high throughput handling makes it an excellent choice if you have a website with many visitors and massive data that needs handling.

Rayobyte Datacenter Proxies are also spread across multiple autonomous system numbers (ASNs). The main advantage of using multiple ASNs is greater redundancy; even if a web admin blocklists or bans an entire ASN of IP addresses, you still have over 9 ASNs to work with from the Rayobyte Datacenter.

With over 300,000 IP addresses in over 29 countries, including the US, UK, Germany, France, and Canada, you’ll have no shortage of locations to choose from for your web scraping.

Whether you choose Rayobyte’s rotating residential proxies or data center proxies, your web scraper or crawler will be far better protected against common anti-scraping measures, such as IP-based bans and blocklists.

Final Thoughts

Although web crawling and web scraping may seem similar at first glance, the two are separate processes. At the basic level, a web crawler indexes web pages, while a web scraper downloads data.

Other than this fundamental difference, there are other differences to consider while looking at web crawling vs. web scraping. These differences include the use cases and types of bots, which lead to the difference in advantages and disadvantages between the two.

No matter what type of bot you use for web crawling vs. web scraping, we recommend you use Rayobyte rotating residential IPs and Datacenter IPs. Once you implement both solutions in your crawler or scraper bots, you’ll be on your way to preventing IP downtimes and bans in no time!


The information contained within this article, including information posted by official staff, guest-submitted material, message board postings, or other third-party material is presented solely for the purposes of education and furtherance of the knowledge of the reader. All trademarks used in this publication are hereby acknowledged as the property of their respective owners.