How AI Reduces Maintenance Costs in Large-Scale Scraping Pipelines

If you’ve ever managed a large scraping pipeline, you already know that the real challenge usually isn’t collecting the data. It’s keeping the system running once it’s live.

At small scale, scraping can feel fairly straightforward. A script pulls data from a page, parses a few fields, and stores the results somewhere useful. When something breaks, someone updates the parser, reruns the job, and everything moves forward again.

At production scale, things look very different.

Pipelines that collect millions of pages per day quickly become living systems. Websites change frequently, traffic patterns shift, IP reputation evolves, and small structural tweaks to a page can quietly break parsers. A retry loop that worked fine in testing can suddenly double your infrastructure costs.

Over time, the majority of engineering effort doesn’t go into building scrapers. It goes into maintaining them.

This is exactly where artificial intelligence is beginning to change the equation. AI isn’t replacing scraping pipelines, and it certainly isn’t eliminating the need for careful engineering. What it’s doing instead is reducing the amount of manual intervention required to keep those systems healthy.

When applied thoughtfully, AI can help teams detect problems earlier, adapt to site changes more quickly, and automate many of the maintenance tasks that previously required constant attention.

Let’s explore how that works in practice and why AI is becoming an increasingly valuable tool for teams operating large-scale scraping infrastructure.

Scrape at Scale With Chromium Stealth Browser

Self-hosted, Linux-first, compatible with all automation frameworks.

Why Scraping Maintenance Becomes Expensive at Scale

Before looking at how AI helps, it’s worth understanding why scraping maintenance becomes such a large operational cost in the first place.

Large scraping systems interact with thousands of websites and millions of pages. Each of those sites evolves independently, often without warning. Every time one of those changes affects a parser, someone has to notice the issue, diagnose the cause, and update the extraction logic.

At the same time, infrastructure variables such as traffic patterns, IP reputation, and how sensitive each endpoint is to request frequency are constantly shifting. Even if each individual problem is small, the cumulative effect can become significant. Maintenance becomes a continuous process rather than an occasional task.

Teams often discover that keeping pipelines running smoothly consumes far more time than building them in the first place.

How AI Changes the Maintenance Model

Traditional scraping systems rely heavily on static logic. Parsers are written to extract specific elements from specific structures. Monitoring rules detect obvious failures, such as requests that return errors or timeouts.

AI introduces a more adaptive layer to this process.

Rather than relying entirely on fixed rules, machine learning models can analyze patterns across large volumes of scraping activity. They can detect subtle changes in page structures, identify unusual behavior in traffic patterns, and predict when certain components of the pipeline are likely to fail.

This shift doesn’t eliminate the need for engineers, but it allows those engineers to spend less time reacting to routine issues and more time improving the system itself.

The result is a pipeline that requires fewer manual interventions to stay healthy.

Detecting Site Changes Earlier

One of the most common sources of maintenance work in scraping pipelines is structural changes to websites.

When a page layout changes, even slightly, parsers that rely on specific selectors may stop extracting the expected fields. In many cases, these failures aren’t immediately obvious because the request itself still succeeds. The scraper retrieves the page, but the data extracted from that page is incomplete or misaligned.

AI-based monitoring systems can detect these changes much earlier.

By analyzing the structure of pages over time, machine learning models can identify deviations from historical patterns. If certain elements suddenly appear in different locations, or if expected fields start returning empty values more frequently, the system can flag the anomaly automatically.

Instead of waiting for downstream dashboards to reveal missing data, engineers receive early signals that a particular site structure has changed.

Catching these issues early shortens the window in which incomplete data flows downstream, and it usually reduces the time required to fix them.
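To make the idea concrete, here is a minimal sketch of one such signal: tracking how often a field comes back non-empty and flagging a sudden drop against its own history. It uses plain statistics as a stand-in for a learned model, and the class name, window sizes, and the 20-point drop tolerance are all illustrative assumptions rather than recommended values.

```python
from collections import deque


class FillRateMonitor:
    """Flags a field whose non-empty rate drops well below its own history.

    Hypothetical helper for illustration; all defaults are assumptions.
    """

    def __init__(self, baseline_size=500, recent_size=50, max_drop=0.2):
        self.baseline = deque(maxlen=baseline_size)  # long-run history
        self.recent = deque(maxlen=recent_size)      # latest pages only
        self.max_drop = max_drop                     # tolerated drop in fill rate

    def observe(self, value):
        """Record whether the extracted value was filled in."""
        filled = value is not None and str(value).strip() != ""
        # A record joins the baseline only once it ages out of the recent window,
        # so a fresh breakage does not immediately pollute the baseline.
        if len(self.recent) == self.recent.maxlen:
            self.baseline.append(self.recent[0])
        self.recent.append(filled)

    def is_anomalous(self):
        """True when the recent fill rate sits far below the baseline rate."""
        if len(self.recent) < self.recent.maxlen or not self.baseline:
            return False  # not enough history yet
        base_rate = sum(self.baseline) / len(self.baseline)
        recent_rate = sum(self.recent) / len(self.recent)
        return base_rate - recent_rate > self.max_drop
```

In practice you would probably keep one monitor per site and field, call `observe()` on every parsed record, and raise a ticket or alert when `is_anomalous()` turns true, even though every request is still returning a successful response.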

Automating Parser Adaptation

Another area where AI is making a noticeable difference is parser maintenance.

Traditional parsers rely on manually written rules that target specific selectors or HTML structures. When those structures change, the parser must be rewritten by hand.

AI-assisted extraction models can help reduce that workload.

These systems analyze the visual or structural relationships between elements on a page rather than relying solely on fixed selectors. If a price field moves from one location to another but retains similar contextual patterns, the model may still recognize it as the same data element.

This doesn’t mean parsers become completely self-healing; engineers still need to validate outputs and adjust logic when major changes occur. However, AI-assisted extraction can significantly reduce the number of manual updates required when sites evolve gradually.

For large pipelines scraping thousands of domains, even a small reduction in parser maintenance can save a considerable amount of engineering time.
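As a rough illustration of the fallback idea, the sketch below tries a fixed class name first and, if that fails, looks for a price-shaped value sitting next to a "price" label. A real AI-assisted extractor would learn these contextual patterns rather than hard-code them; the regex, the `price` class name, and the function names here are assumptions made for the example.

```python
import re
from html.parser import HTMLParser

# Assumed price shape: a currency symbol followed by digits, e.g. "$19.99".
PRICE_RE = re.compile(r"[$€£]\s?\d[\d,]*(?:\.\d{2})?")
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}


class _TextCollector(HTMLParser):
    """Collects (class attribute of enclosing tag, text) for each text node."""

    def __init__(self):
        super().__init__()
        self._class_stack = []
        self.nodes = []

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_TAGS:  # void tags never get a closing tag
            self._class_stack.append(dict(attrs).get("class") or "")

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._class_stack:
            self._class_stack.pop()

    def handle_data(self, data):
        text = data.strip()
        if text:
            cls = self._class_stack[-1] if self._class_stack else ""
            self.nodes.append((cls, text))


def extract_price(html, primary_class="price"):
    """Return (price, method): fixed selector first, contextual fallback second."""
    parser = _TextCollector()
    parser.feed(html)
    parser.close()
    nodes = parser.nodes

    # 1. The traditional rule: a known class name.
    for cls, text in nodes:
        match = PRICE_RE.search(text)
        if match and primary_class in cls.split():
            return match.group(), "selector"

    # 2. Fallback: a price-shaped value next to a "price" label.
    for i, (_, text) in enumerate(nodes):
        match = PRICE_RE.search(text)
        if not match:
            continue
        context = (nodes[i - 1][1] if i > 0 else "") + " " + text
        if "price" in context.lower():
            return match.group(), "context"

    return None, "missing"
```

Returning the method alongside the value matters: anything recovered through the fallback path is a good candidate for human review, which keeps engineers in the loop without making them rewrite the selector the moment a site changes.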

Improving Traffic Management

Maintenance costs also arise from infrastructure inefficiencies.

When scraping systems encounter increased failure rates, teams often respond by increasing retries or expanding proxy pools. While this can temporarily restore success rates, it can also inflate bandwidth usage and proxy costs.
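A quick back-of-the-envelope calculation shows how fast retries add up. The sketch below assumes every attempt succeeds independently with the same probability, which is a simplification, and the function name is ours.

```python
def expected_attempts(success_rate, max_attempts):
    """Average number of requests sent per page.

    Assumes each attempt succeeds independently with probability
    `success_rate`, and that we stop after `max_attempts` tries.
    """
    fail = 1.0 - success_rate
    # Attempt k+1 is only sent if the first k attempts all failed.
    return sum(fail ** k for k in range(max_attempts))


healthy = expected_attempts(success_rate=0.95, max_attempts=3)
degraded = expected_attempts(success_rate=0.50, max_attempts=5)
print(f"healthy: {healthy:.2f} requests/page, degraded: {degraded:.2f} requests/page")
```

Under these assumptions, a pipeline that drops from a 95% to a 50% success rate and responds by raising the attempt limit from three to five goes from roughly 1.05 to roughly 1.94 requests per page. That is close to double the bandwidth and proxy usage for the same amount of data.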

AI can help identify the root causes of these performance changes.

By analyzing traffic patterns, success rates, and latency across different regions and endpoints, machine learning models can detect when certain IPs are beginning to experience reputation degradation or when specific endpoints are becoming more sensitive to request frequency.

Instead of relying on broad adjustments, the system can recommend targeted changes to rotation strategies or concurrency levels.

This kind of insight helps teams maintain performance without unnecessarily increasing infrastructure spending.
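One simple way to surface that kind of targeted signal is to keep an exponentially weighted success score per proxy, so recent failures count more than old ones. The sketch below is a statistical stand-in for a learned model; the smoothing factor, minimum sample size, and 0.7 floor are illustrative assumptions.

```python
from collections import defaultdict


class ProxyHealthTracker:
    """Tracks a recency-weighted success score per proxy (hypothetical helper)."""

    def __init__(self, alpha=0.2, min_requests=20, floor=0.7):
        self.alpha = alpha                # weight given to the newest outcome
        self.min_requests = min_requests  # ignore proxies with too little data
        self.floor = floor                # score below which a proxy is flagged
        self.score = defaultdict(lambda: 1.0)
        self.count = defaultdict(int)

    def record(self, proxy, success):
        """Blend the newest outcome into the proxy's running score."""
        outcome = 1.0 if success else 0.0
        self.score[proxy] = (1 - self.alpha) * self.score[proxy] + self.alpha * outcome
        self.count[proxy] += 1

    def degraded(self):
        """Proxies with enough traffic whose score has fallen below the floor."""
        return sorted(
            proxy
            for proxy, score in self.score.items()
            if self.count[proxy] >= self.min_requests and score < self.floor
        )
```

Instead of growing the whole pool or raising retries everywhere, the output of `degraded()` can drive a narrow change, such as resting only the flagged IPs or lowering concurrency on the endpoints they were serving.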

Reducing False Alarms in Monitoring

Large scraping pipelines generate enormous volumes of monitoring data. Requests succeed, fail, retry, and complete across thousands of endpoints every minute.

Traditional alerting systems often rely on simple thresholds, such as failure rates exceeding a certain percentage. While these thresholds are useful, they can also produce false alarms when traffic patterns fluctuate naturally.

AI-based monitoring systems analyze patterns over time rather than reacting to isolated spikes.

By learning what “normal” traffic behavior looks like across different parts of the pipeline, these systems can distinguish between routine fluctuations and genuine anomalies.

The result is fewer unnecessary alerts and more accurate detection of real issues.

This improves operational efficiency because engineers spend less time investigating problems that aren’t actually problems.
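The difference from a fixed threshold can be sketched in a few lines. Here an endpoint is compared against its own recent failure rates instead of a global percentage. A rolling z-score is only one of many possible baselines, and the window size, threshold, and spread floor below are assumptions chosen for the example.

```python
import statistics
from collections import deque


class BaselineAlerter:
    """Alerts when a failure rate is unusual for this endpoint's own history."""

    def __init__(self, window=48, z_threshold=3.0, min_history=12):
        self.history = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_history = min_history

    def check(self, failure_rate):
        alert = False
        if len(self.history) >= self.min_history:
            mean = statistics.fmean(self.history)
            # Floor the spread so a very flat history does not alert on tiny moves.
            spread = max(statistics.pstdev(self.history), 0.01)
            alert = (failure_rate - mean) / spread > self.z_threshold
        if not alert:
            # Keep anomalous readings out of the baseline.
            self.history.append(failure_rate)
        return alert
```

An endpoint that routinely fails between 8% and 12% of requests would trip a static 10% threshold again and again. With a learned baseline, those readings count as normal, while a jump to 30% still stands out.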

Predicting Infrastructure Stress

Another area where AI can reduce maintenance costs is predictive infrastructure analysis.

Large scraping pipelines often encounter performance bottlenecks when traffic scales unexpectedly. A new endpoint might generate higher concurrency than anticipated, or a change in site behavior might increase request latency.

Machine learning models can analyze historical traffic data to identify patterns that precede these stress points.

For example, if certain combinations of request volume and retry rates historically lead to performance degradation, the system can alert engineers before the issue becomes critical.

Predictive monitoring allows teams to adjust infrastructure proactively rather than reacting to outages after they occur.
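A very small version of this idea is a lookup table: bucket past observations by request volume and retry rate, and record how often each bucket was followed by degradation. A production system would more likely use a trained model. The bucket sizes, the minimum sample count, and the definition of "degraded soon after" are all assumptions here.

```python
from collections import defaultdict


class StressPredictor:
    """Estimates degradation risk from historical (volume, retry rate) buckets."""

    def __init__(self, volume_step=1000, retry_step_pct=5, min_samples=5):
        self.volume_step = volume_step        # requests/min per bucket
        self.retry_step_pct = retry_step_pct  # retry-rate percentage points per bucket
        self.min_samples = min_samples
        self.seen = defaultdict(int)
        self.degraded = defaultdict(int)

    def _bucket(self, requests_per_min, retry_rate):
        # Work in whole percentage points to avoid float floor-division surprises.
        retry_pct = round(retry_rate * 100)
        return (int(requests_per_min) // self.volume_step,
                retry_pct // self.retry_step_pct)

    def learn(self, requests_per_min, retry_rate, degraded_soon_after):
        """Record one historical observation and what followed it."""
        key = self._bucket(requests_per_min, retry_rate)
        self.seen[key] += 1
        if degraded_soon_after:
            self.degraded[key] += 1

    def risk(self, requests_per_min, retry_rate):
        """Share of similar past situations that degraded, or None if unknown."""
        key = self._bucket(requests_per_min, retry_rate)
        if self.seen[key] < self.min_samples:
            return None
        return self.degraded[key] / self.seen[key]
```

If `risk()` comes back high for the current load, the team can add capacity or lower concurrency before latency actually climbs. A result of `None` is a useful signal too: it means the pipeline is operating somewhere it has little history for.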

Improving Data Quality Validation

Maintenance involves not only keeping pipelines running but also making sure that the data collected remains accurate.

Even when requests succeed and parsers return values, subtle errors can still occur. Prices may shift into the wrong fields, units may change, or certain attributes may disappear temporarily.

AI-based validation systems can analyze datasets to detect unusual patterns that might indicate extraction issues. For example, if a product category historically contains prices within a certain range and suddenly begins returning values far outside that range, the system can flag the anomaly.

These types of checks help teams maintain data quality without manually reviewing massive datasets.
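The price-range example can be sketched with a robust statistic. The function below compares new values with the historical median and flags anything more than a few median absolute deviations away. The multiplier `k`, the 1% spread floor, and the sample numbers are illustrative assumptions, and a real validator would likely look at more than one attribute at a time.

```python
import statistics


def flag_price_outliers(history, new_prices, k=5.0):
    """Return the new prices that sit far outside the historical range.

    Uses the median and the median absolute deviation (MAD), which are
    less distorted by a few bad historical values than mean and stdev.
    """
    median = statistics.median(history)
    mad = statistics.median([abs(p - median) for p in history])
    # Floor the spread so a category with near-identical prices still works.
    spread = max(mad, 0.01 * abs(median))
    return [p for p in new_prices if abs(p - median) > k * spread]


# Hypothetical category history and a new batch with two suspicious values:
# one looks like a lost decimal point, the other like a misplaced field.
history = [19.99, 21.50, 18.75, 22.00, 20.25, 19.50, 23.10]
suspicious = flag_price_outliers(history, [20.99, 2099.0, 0.21])
```

Flagged values do not have to be dropped automatically. Routing them to a review queue, along with the page they came from, is often enough to catch a parser that has quietly started reading the wrong element.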

Where Human Expertise Still Matters

Despite its advantages, AI isn’t a replacement for experienced engineers.

Scraping pipelines interact with highly dynamic environments, and human judgment remains essential when designing extraction logic, defining ethical boundaries, and interpreting unusual patterns.

What AI does best is assist with the repetitive aspects of maintenance. It surfaces anomalies earlier, automates certain adjustments, and reduces the volume of routine tasks that engineers must perform manually. In practice, the most effective scraping teams combine strong engineering foundations with AI-driven monitoring and analysis.

That combination allows pipelines to remain stable while minimizing ongoing maintenance costs.

The Long-Term Impact on Scraping Operations

As scraping infrastructure continues to grow in scale and complexity, maintenance efficiency will become an increasingly important competitive factor.

Teams that rely entirely on manual monitoring and parser updates will find it difficult to keep up with rapidly evolving websites and expanding data requirements.

Teams that incorporate AI into their pipelines will be able to detect problems earlier, adapt to changes faster, and operate with leaner engineering resources.

Over time, the difference between those two approaches becomes significant.

Reducing maintenance overhead allows teams to focus more energy on improving data products and less on fixing broken infrastructure.

Working With Rayobyte

At Rayobyte, we work with teams that operate some of the largest scraping pipelines in the world, and we’ve seen firsthand how quickly maintenance costs can grow as systems scale.

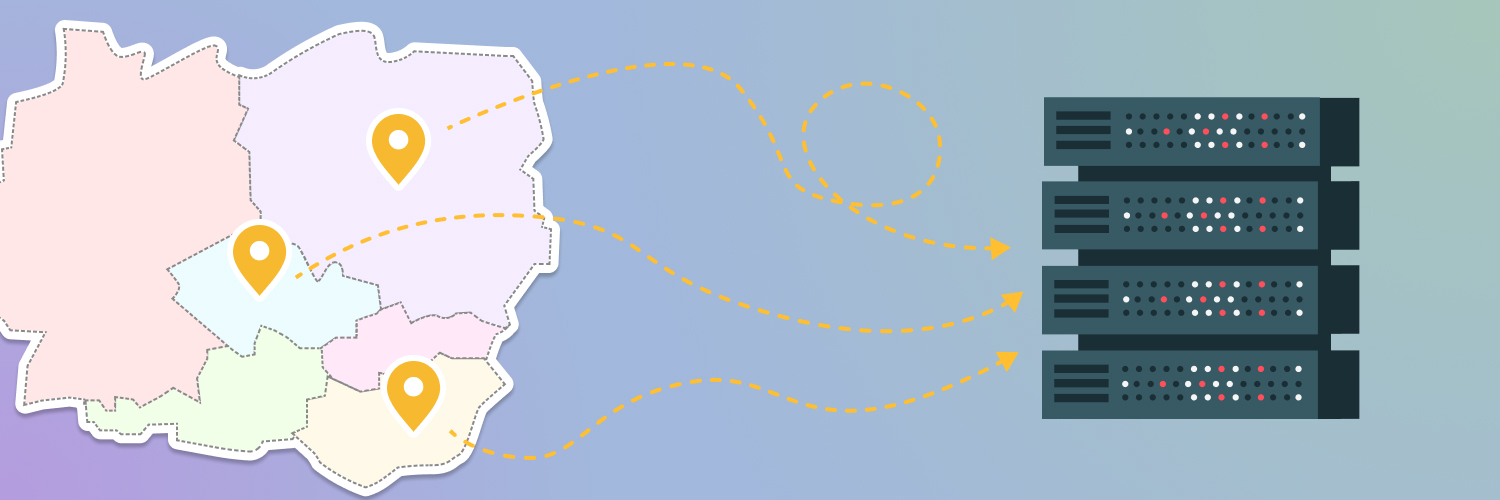

Our proxy infrastructure is designed to support stable traffic distribution, accurate geolocation, and flexible rotation strategies so that pipelines can operate predictably even as workloads expand. By maintaining large, well-managed proxy pools across datacenter, residential, and mobile networks, we help teams reduce the infrastructure friction that often leads to maintenance challenges.

Beyond providing proxies, we work closely with customers to analyze performance patterns, tune rotation behavior, and design architectures that remain resilient as websites evolve. Many teams are also beginning to incorporate AI-driven monitoring and validation into their workflows, and we’re always happy to help customers think through how those systems integrate with their proxy strategies.

Scraping pipelines perform best when infrastructure, monitoring, and automation work together. When those elements are aligned, teams can spend less time maintaining systems and more time building the data products that depend on them.

If your team is scaling a scraping pipeline and looking for infrastructure that supports long-term stability, we’re always happy to help you design a setup that grows alongside your workload. Get in touch now.
