Python Windows Automation: Run Python Scripts With Windows Task Scheduler

Web scraping allows businesses to quickly obtain, analyze, and interpret data from websites. It helps them better understand competition, market trends, customer behavior, and more. Web scraping can also help businesses identify potential opportunities in their industry and new areas of growth.

A scripting language like Python can scale the process by gathering data from many different sources or websites. Well-established Python libraries such as BeautifulSoup make data retrieval easier, and browser automation tools such as Selenium can handle tedious steps like logging into websites or navigating pagination. When you combine Python with the scheduling functions of Windows Task Scheduler, you can create automated scripts that collect data regularly with little intervention. This Python and Windows automation combo helps small and mid-sized companies get organized faster with less human effort.

This guide covers what you need to plan your own web scraping operation with Python and Task Scheduler. Even beginners and less technical users can follow it, so smaller organizations won’t need extensive technical capabilities to get started.

Why Python Is a Good Choice for Web Scraping

Python is excellent for web scraping thanks to its expansive libraries and platform independence. Libraries such as BeautifulSoup, which specializes in parsing HTML and XML, make extracting information from websites easier and faster. Scrapers written in Python are portable, meaning they can run on almost any platform (Linux, macOS, or Windows) without significant changes.

The syntax is intuitive, so newcomers can learn the language quickly. Python code is also generally easier to read than Perl and more concise than C++, which can shorten development time: many tasks take far less code than they would in lower-level languages. This makes it easier for developers of any level to build robust custom applications, such as automated web scrapers, without the overhead of more complex programming languages.

The wider Python ecosystem adds further tools, such as the requests library for sending HTTP requests and LXML for fast parsing with XPath selection, so users have greater control over the data they scrape.

Scrape at Scale With Chromium Stealth Browser

Self-hosted, Linux-first, compatible with all automation frameworks.

The Fundamentals of Web Scraping Using Python

A few protocols govern how data is transferred online. Understanding how they work helps you decide which ones your scraper should use. You’ll also want to understand the structure of the HTML a website sends back, so you can identify which parts of it you can safely ignore. You don’t need every bit of data, and you don’t want to waste time scraping irrelevant markup, such as tags that exist only for layout and design. Once you retrieve the data, you often need to parse it, that is, turn it into a structured form that people and programs can read.

Certain website features also matter when web scraping with Python. Some, like pagination (next page, previous page, and so on), require additional automation; otherwise, you’d have to click “next page” manually instead of automating everything.
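Following “next page” links can be automated with a short loop. The sketch below is a minimal example that assumes the BeautifulSoup package is installed and that the site marks its next-page link with rel="next" (many sites do, but check yours). The fetch argument is any function you supply that takes a URL and returns HTML; iter_pages is a hypothetical helper name.

```python
from typing import Callable, Iterator, Optional
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def iter_pages(
    start_url: str,
    fetch: Callable[[str], str],
    max_pages: int = 50,
) -> Iterator[BeautifulSoup]:
    """Yield each parsed page, following rel="next" links until none remain."""
    url: Optional[str] = start_url
    visited = set()
    while url and url not in visited and len(visited) < max_pages:
        visited.add(url)
        soup = BeautifulSoup(fetch(url), "html.parser")
        yield soup
        # Assumption: the next-page link looks like <a rel="next" href="...">.
        next_link = soup.find("a", rel="next", href=True)
        # Relative links such as "?page=2" are resolved against the current URL.
        url = urljoin(url, next_link["href"]) if next_link else None
```

For example, iter_pages("https://example.com/list?page=1", lambda u: requests.get(u, timeout=10).text) walks the listing page by page. The visited set and the max_pages cap stop the loop if a site ever links its pages in a cycle.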

Requests protocols

When you access anything online, you’re performing a request. A request is sent from your computer (the client) to a web server (which hosts the website or app), typically to view content. The server answers with a response, which includes a status code, headers, and the content you asked for.
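In Python, one request/response round trip takes only a few lines. This minimal sketch uses the standard library’s urllib.request so it runs without extra packages (the popular requests library is a common alternative). The User-Agent string and the fetch_html name are placeholders to replace with your own.

```python
from urllib.request import Request, urlopen

# Placeholder identification; some sites reject Python's default User-Agent.
HEADERS = {"User-Agent": "example-scraper/0.1 (contact: you@example.com)"}


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send a GET request and return the response body as text."""
    request = Request(url, headers=HEADERS)  # the client's request
    with urlopen(request, timeout=timeout) as response:  # the server's response
        # urlopen raises urllib.error.HTTPError for 4xx/5xx status codes,
        # so reaching this point means the server answered successfully.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

The timeout matters for scheduled jobs: without it, a server that never answers could leave the task hanging until Task Scheduler stops it.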

Several protocols can carry web scraping requests, each with benefits and drawbacks. The most common include:

- HTTP: Plain HTTP is the least secure option because it does not encrypt traffic. However, it remains one of the simplest.

- HTTP Secure (HTTPS): HTTPS encrypts data in transit with TLS. The encryption adds some overhead, which can make it slightly slower and more resource-intensive, but it protects everything you send and receive, and many websites now redirect plain HTTP requests to HTTPS.

- File Transfer Protocol (FTP): FTP is designed for transferring files rather than serving web pages, and it can move large files efficiently. Plain FTP does not encrypt traffic, including login credentials, so it should only be used where the user and server fully trust each other or no sensitive data is transmitted. It is better suited to file-sharing scenarios than to scraping web pages.
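The protocol is visible in each URL’s scheme, so a scraper meant only for web pages can check it before sending anything. This is a minimal sketch using the standard library; the policy it enforces (allow HTTPS, warn on plain HTTP, reject everything else, including ftp:// links) is an assumption you can adjust, and check_scheme is a hypothetical helper name.

```python
import warnings
from urllib.parse import urlsplit


def check_scheme(url: str) -> str:
    """Allow HTTPS, warn on plain HTTP, and reject any other scheme."""
    scheme = urlsplit(url).scheme.lower()
    if scheme == "https":
        return url
    if scheme == "http":
        # Still allowed, but flagged because the traffic is unencrypted.
        warnings.warn(f"{url} is fetched over unencrypted HTTP", stacklevel=2)
        return url
    raise ValueError(f"unsupported scheme {scheme!r} in {url}")
```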

With a basic understanding of how these protocols move data between points on the internet, you can build more efficient scraping techniques.

This leads to the next concept: the actual code structure of a source website.

HTML and CSS

Browsers use HTML to interpret a webpage’s content and structure, while CSS (Cascading Style Sheets) controls its presentation, such as text sizes, colors, and fonts. HTML is readable by both humans and programs like Python scripts, which can extract or manipulate it for specific tasks.

After downloading pages over these protocols, you need to understand how the retrieved data is structured so your Python programs can process it properly. The content you want is usually wrapped in HTML tags within the downloaded pages. Common targets include the HTML elements below:

- Divs (“<div>”) identified by “id” and “class” attributes

- Anchor tags (“<a>”) whose “href” attributes hold link URLs (often nested inside list items, “<li>”)

- Tables, where each row (“<tr>”) contains data cells (“<td>”)

- Paragraphs, marked by “<p>” tags

- Images, referenced through the image source attribute (“<img src=...>”)

All of these can sit deep within the HTML of the pages you scrape. Besides these tags, it’s worth learning CSS selectors, which let you target elements by tag, class, ID, or position in the page structure instead of walking the HTML step by step. More advanced websites, particularly those that build their content with JavaScript after the page loads, require more nuanced scraping, since the data may not be in the initial HTML at all.
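Here is how the elements listed above can be pulled out with BeautifulSoup, first by navigating tag by tag and then with CSS selectors. It is a minimal sketch, assuming the beautifulsoup4 package is installed; the HTML fragment is made up for illustration.

```python
from bs4 import BeautifulSoup

# Hypothetical page fragment containing the element types listed above.
HTML = """
<div id="products" class="listing">
  <ul>
    <li><a href="/item/1">Widget</a></li>
    <li><a href="/item/2">Gadget</a></li>
  </ul>
  <table>
    <tr><td>Widget</td><td>9.99</td></tr>
    <tr><td>Gadget</td><td>14.50</td></tr>
  </table>
  <p>Prices updated daily.</p>
  <img src="/img/banner.png" alt="Banner">
</div>
"""

soup = BeautifulSoup(HTML, "html.parser")  # html.parser ships with Python

# Tag-by-tag navigation with find() and find_all().
listing = soup.find("div", id="products")
links = [a["href"] for a in listing.find_all("a", href=True)]
rows = [[td.get_text(strip=True) for td in tr.find_all("td")] for tr in listing.find_all("tr")]
note = listing.find("p").get_text(strip=True)
banner = listing.find("img")["src"]

# The same data reached with CSS selectors through select().
link_texts = [a.get_text() for a in soup.select("div.listing li > a")]
prices = [float(td.get_text()) for td in soup.select("#products tr td:nth-of-type(2)")]
```

Selectors tend to be shorter and easier to keep in one place, which helps when a site’s layout changes and you need to update them.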

Once you know where the content you’re after is located, you can plan how to parse it.

Parsing

Libraries such as BeautifulSoup and LXML make HTML documents easier to work with by parsing them into a tree of elements that your code can search and navigate.

BeautifulSoup is a popular Python library for parsing web pages, and it copes well with both clean and messy markup. LXML is an alternative library that supports XPath (as well as XSLT) for navigating documents. Both libraries can speed up intricate extraction tasks and make them more accurate. If you need to extract information from a large document with many nested elements, LXML’s XPath support can simplify navigating the structure and quickly locating the data you want. For example, you can write queries like “find all nodes containing a specific keyword” or “select every cell in the second column of a table.” This narrows down what needs processing without manually inspecting each element. LXML is also built on fast C libraries (libxml2 and libxslt), making it well suited to large-scale parsing.
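The two example queries above translate into XPath roughly like this. It is a minimal sketch, assuming the lxml package is installed; the page content is invented for illustration.

```python
from lxml import html

# Hypothetical page with nested elements.
PAGE = """
<html><body>
  <ul id="news">
    <li><a href="/a">Python 3.13 released</a></li>
    <li><a href="/b">Quarterly market report</a></li>
    <li><a href="/c">Scheduling tips for Python scripts</a></li>
  </ul>
  <table id="prices">
    <tr><td>Widget</td><td>9.99</td></tr>
    <tr><td>Gadget</td><td>14.50</td></tr>
  </table>
</body></html>
"""

tree = html.fromstring(PAGE)

# "Find all links whose text contains a keyword." $kw is an XPath variable
# filled in from the keyword argument, which avoids quoting problems.
matches = tree.xpath('//ul[@id="news"]//a[contains(text(), $kw)]', kw="Python")
titles = [a.text_content() for a in matches]
hrefs = [a.get("href") for a in matches]

# "Select every cell in the second column of a table."
second_column = tree.xpath('//table[@id="prices"]//tr/td[2]/text()')
```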

BeautifulSoup is great for extracting data from tags in HTML documents, while LXML may be better suited to complex XML files or deeply nested structures. Choose the library that best fits your project and use it consistently. This also helps when scheduling with Windows Task Scheduler, because you can separate scraping tasks by source website. For example, if several websites are best handled with BeautifulSoup, you can group them into one script with one Task Scheduler task and one recurring schedule.
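One way to give each group its own task is a single script that takes the group name as a command-line argument. In this minimal sketch, SITE_GROUPS, its URLs, and the --group option are all hypothetical; each Task Scheduler task would pass a different value, for example webscraper_script.py --group news.

```python
import argparse
from typing import List, Optional

# Hypothetical site groups: each group shares one parser and gets its own
# Task Scheduler task and schedule.
SITE_GROUPS = {
    "news": ["https://news.example.com/latest"],
    "catalog": [
        "https://shop.example.com/products?page=1",
        "https://outlet.example.com/deals",
    ],
}


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    parser = argparse.ArgumentParser(description="Scrape one group of sites.")
    parser.add_argument("--group", required=True, choices=sorted(SITE_GROUPS))
    return parser.parse_args(argv)


def main(argv: Optional[List[str]] = None) -> int:
    args = parse_args(argv)
    for url in SITE_GROUPS[args.group]:
        # Placeholder: call the fetch/parse code that suits this group here.
        print(f"[{args.group}] scraping {url}")
    return 0
```

In the real script, finish with if __name__ == "__main__": raise SystemExit(main()) so the return value becomes the exit code Task Scheduler records.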

Automating website access and trigger activity

One last important factor you need to consider is automating interactions with websites.

Selenium is a browser automation tool with Python bindings. It lets you control a browser programmatically (clicking buttons, filling in forms, scrolling), so the computer can interact with a website’s graphical user interface (GUI) without human input. That makes it useful for pages that require logging in or that load their content with JavaScript.

Selenium can be used in tandem with other libraries, such as BeautifulSoup or LXML, during the scraping process or simply on its own for automation purposes.

Python code can automate the entire process explained above using a variety of easily accessible features, libraries, and packages.

Using Windows Task Scheduler for Automated Data Retrieval

Windows Task Scheduler is a system-level program that allows users to launch programs or scripts at specific times, recurring dates, and intervals without manual intervention. It also has advanced features accessible in its dashboard, like adding triggers that initiate tasks for a specific event.

It can save time and effort by launching your scraping scripts on schedule, so tasks such as visiting specific websites, filling out forms, reviewing data sources, and extracting content run without anyone starting them by hand. This makes it especially useful for small to mid-sized businesses that lack extensive technical experience or the time and staff to do this work manually.

To create a task, open the Start menu, search for “Task Scheduler,” and open it. Click “Action” in the menu bar and select “Create Basic Task.” The wizard asks you to name the task, choose a trigger (when it should run), and choose an action. For a Python scraper, the action is “Start a program”: point it at your Python interpreter (python.exe) and pass your script’s path as an argument. Click “Next” through each step, review the summary, and click “Finish” to save the task.
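The same kind of task can also be registered from the command line with schtasks.exe, which is handy when you set up several machines. This minimal sketch only builds the argument list; the task name, script path, and daily 06:00 schedule are assumptions, and build_schtasks_create is a hypothetical helper.

```python
import sys
from typing import List


def build_schtasks_create(
    task_name: str,
    script_path: str,
    python_exe: str = sys.executable,
    schedule: str = "DAILY",
    start_time: str = "06:00",
) -> List[str]:
    """Build a schtasks.exe command that runs a Python script on a schedule."""
    # /TR takes the whole command line as one string; the inner quotes keep
    # paths that contain spaces intact.
    task_run = f'"{python_exe}" "{script_path}"'
    return [
        "schtasks", "/Create",
        "/TN", task_name,    # task name shown in the Task Scheduler library
        "/TR", task_run,     # program and arguments to run
        "/SC", schedule,     # MINUTE, HOURLY, DAILY, WEEKLY, MONTHLY, ...
        "/ST", start_time,   # start time in 24-hour HH:mm format
        "/F",                # overwrite an existing task with the same name
    ]
```

On Windows, pass the result to subprocess.run(..., check=True). schtasks has no option for the “Start in” folder, so a script registered this way should build its file paths from its own location rather than relying on the working directory.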

Task Scheduler can also start tasks in response to events rather than only on a clock. Its event-based triggers include system startup, user logon, the computer becoming idle, and specific entries in the Windows event log. Task Scheduler does not watch web pages for changes itself; that logic belongs in your script. For example, you could write a Python scraper that uses Pandas to collect data from a website and export it as an Excel file, then have Windows Task Scheduler run it daily without manual intervention or updates. Each run checks the website’s current content, and the script updates your saved output when something has changed.
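Because the change detection lives in the script, each run needs to remember what it saw last time. A minimal sketch, assuming a small JSON state file next to the script is acceptable: it stores a SHA-256 hash per page and reports whether the content differs. The function and file names are hypothetical.

```python
import hashlib
import json
from pathlib import Path
from typing import Union


def content_changed(key: str, content: str, state_file: Union[str, Path]) -> bool:
    """Return True if content differs from what was stored for key last run.

    A SHA-256 hash of each page's content is kept in a JSON file, so the
    comparison survives between scheduled runs.
    """
    state_path = Path(state_file)
    state = json.loads(state_path.read_text(encoding="utf-8")) if state_path.exists() else {}
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    if state.get(key) == digest:
        return False
    state[key] = digest
    state_path.write_text(json.dumps(state, indent=2), encoding="utf-8")
    return True
```

Hashing the whole page would flag changes in ads or timestamps, so it usually works better to hash only the extracted data (for example, the rows you are about to export) and write the Excel file only when content_changed returns True.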

These features let you automate simple but frequent web scraping tasks with little effort, saving substantial time over the long term. Consistent, scheduled runs also make your data collection more repeatable, which helps keep results accurate over time.

If you need an alternative to Windows Task Scheduler, you can try freeware such as Z-Cron Scheduler.

Creating Simple Auto-scheduling Scripts With Python and Windows Task Scheduler

With the overview out of the way, it’s time to focus on how small to mid-sized businesses can leverage Windows Task Scheduler and Python scripts to automate their web scraping. By the end of this section, you’ll have a basic framework for setting up automated data retrieval tasks with your very own Python scheduler.

Setting up your Python script

Prep the Python code the Task Scheduler will be running:

- Install the packages you need, such as BeautifulSoup4 and LXML (the parsing libraries covered earlier), with pip, Python’s package manager: pip install beautifulsoup4 lxml. You’re going to need them later.

- Create a Python script in your preferred text editor (e.g., Notepad). You can start the file with #!/usr/bin/env python3. Windows ignores this line when you run python.exe directly, but the Python launcher for Windows (py) reads it, and it keeps the script portable to Linux and macOS. Then write whatever automation code you want, and give the file a descriptive, memorable name such as “webscraper_script.”

- Save it with the .py extension in a dedicated scripts folder. The location doesn’t matter, but keep it somewhere stable and note the full path, since Task Scheduler will need it.

- Open a Command Prompt or PowerShell window in the same folder and run pip freeze > requirements.txt. This records every installed package and its version in a file called requirements.txt, which you should keep in the scripts directory next to your .py files. You can later recreate the same environment with pip install -r requirements.txt.
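A scheduled script benefits from a few habits: paths built from the script’s own location, a log file, and timestamped output. The skeleton below is a minimal sketch under those assumptions; scrape() is a placeholder for your real code, and the output folder and file names are hypothetical.

```python
#!/usr/bin/env python3
"""webscraper_script.py: minimal skeleton for a script run by Task Scheduler."""
import csv
import logging
from datetime import datetime, timezone
from pathlib import Path
from typing import Dict, List, Union

# Task Scheduler may start the script in another working directory (often
# C:\Windows\System32), so build paths from this file's own location.
BASE_DIR = Path(__file__).resolve().parent


def scrape() -> List[Dict[str, object]]:
    """Placeholder for your real scraping code; returns one dict per record."""
    return [{"source": "example", "value": 42}]


def main(output_dir: Union[str, Path] = BASE_DIR / "output") -> int:
    out_dir = Path(output_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    logging.basicConfig(
        filename=str(out_dir / "scraper.log"),
        level=logging.INFO,
        format="%(asctime)s %(levelname)s %(message)s",
    )
    rows = scrape()
    if not rows:
        logging.warning("No records scraped")
        return 0
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    out_file = out_dir / f"results_{stamp}.csv"
    with out_file.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=list(rows[0]))
        writer.writeheader()
        writer.writerows(rows)
    logging.info("Wrote %d records to %s", len(rows), out_file)
    return 0
```

End the real file with if __name__ == "__main__": raise SystemExit(main()) so the return value becomes the process exit code, which Task Scheduler shows as the task’s last run result.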

Now you have everything ready to set up your task scheduler job. Read our guide on Python web scraping for a more detailed breakdown of web scraping scripting with this programming language.

Creating tasks and triggers in the Task Scheduler Console

To set up an automated web scraping task with Python, launch Windows Task Scheduler and click “Create Task” in the Actions pane. On the General tab, give the task a relevant name and description. On the Triggers tab, define when the scraper should run, for example daily or weekly, optionally repeating every few hours. If you want a record of each run, enable task history by clicking “Enable All Tasks History” in the Actions pane (optional).

Next, open the “Actions” tab, click “New,” and choose “Start a program” as the action type. In “Program/script,” enter the full path to python.exe (or pythonw.exe if you don’t want a console window to appear). In “Add arguments,” enter the full path to your script, such as “webscraper_script.py” (in quotes if the path contains spaces), followed by any command-line options your script accepts. In “Start in,” enter the script’s folder so relative file paths resolve correctly. Libraries don’t need to be listed here: the script loads them through its own import statements, as long as they are installed for the interpreter you selected.
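If you’re unsure which interpreter path to enter, you can ask Python itself. This minimal sketch prints suggested values for the three fields; run it with the same interpreter (or virtual environment) you intend to schedule, for example python task_fields.py C:\scripts\webscraper_script.py. The file name task_fields.py and the task_action_fields helper are hypothetical.

```python
import sys
from pathlib import Path
from typing import Dict


def task_action_fields(script_path: str) -> Dict[str, str]:
    """Suggest values for Task Scheduler's "Start a program" action fields."""
    script = Path(script_path).resolve()
    return {
        # The interpreter running this code; inside a virtual environment this
        # is the environment's python.exe, which already has its packages.
        "Program/script": sys.executable,
        # Quoted in case the path contains spaces.
        "Add arguments (optional)": f'"{script}"',
        # Left unquoted; quotes in this field are a common cause of failed runs.
        "Start in (optional)": str(script.parent),
    }


if __name__ == "__main__" and len(sys.argv) > 1:
    for field, value in task_action_fields(sys.argv[1]).items():
        print(f"{field}: {value}")
```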

The “Triggers” tab is where you set the schedule for your web scraper. Triggers can fire at specific times or intervals, or in response to events such as logon or system startup. If a run might still be in progress when the next one is due, the “Settings” tab lets you choose whether Task Scheduler runs a new instance in parallel, queues it, skips it, or stops the existing one. Once everything is configured, save the task, then right-click it and choose “Run” to test it.

There are other ways to set up Task Scheduler jobs. One is to create a batch file that calls every script the task needs. This lets you choose which Python environment to use (for example, by activating a virtual environment first), giving you more flexibility. However, the method above is more direct, as it only requires the interpreter and script paths.

Adding more functionality to your automated Python scripts

Once the task is in place, each run of your Python script can request the targeted data. If you’re dealing with unstructured HTML or raw CSV exports, libraries such as BeautifulSoup and Pandas provide easy-to-use functions for turning raw data into usable information.

Pandas can read many common formats, including CSV, JSON, XML, Excel, and HTML tables, and write the results to structured output files; PDFs require separate libraries. There are also ready-made Python packages online that streamline common scraping tasks, so small businesses don’t have to build everything from scratch, which would require a significant upfront time investment.
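Nested JSON, a common format when a site loads data from an internal endpoint, can be flattened into a table in one call. A minimal sketch, assuming pandas is installed; the payload is invented for illustration.

```python
import pandas as pd

# Hypothetical JSON records, e.g. returned by a site's product endpoint.
payload = [
    {"id": 1, "name": "Widget", "price": {"amount": 9.99, "currency": "USD"}},
    {"id": 2, "name": "Gadget", "price": {"amount": 14.5, "currency": "USD"}},
]

# json_normalize flattens nested objects into dotted column names such as
# "price.amount" and "price.currency".
df = pd.json_normalize(payload)
```

From there, df.to_csv("products.csv", index=False) writes a structured output file; df.to_excel works too but needs an Excel engine such as openpyxl installed.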

Additionally, APIs such as those from Google and other data providers can supply structured data, such as stock market prices, far faster than scraping it from web pages. Consider calling them from your Python script as well if they’re relevant. Properly configured API requests can filter results by keyword and rely on ready-made client libraries, significantly reducing manual steps.

You can also build proxy handling (e.g., rotating proxies) into your Python script. If you use a VPN instead, you can add a separate Task Scheduler task that connects it before the scraper runs.
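Rotating proxies can be as simple as cycling through a list. This minimal sketch uses the standard library’s urllib; the proxy addresses and credentials are placeholders for whatever your provider issues, and ProxyRotator is a hypothetical class name.

```python
import itertools
from typing import Iterable, Tuple
from urllib.request import OpenerDirector, ProxyHandler, build_opener


class ProxyRotator:
    """Hand out urllib openers that cycle through a list of proxies."""

    def __init__(self, proxies: Iterable[str]) -> None:
        self._cycle = itertools.cycle(list(proxies))

    def next_opener(self) -> Tuple[OpenerDirector, str]:
        proxy = next(self._cycle)
        # Route both HTTP and HTTPS traffic through the same proxy endpoint.
        opener = build_opener(ProxyHandler({"http": proxy, "https": proxy}))
        return opener, proxy


# Hypothetical endpoints; use the addresses and credentials your provider gives you.
rotator = ProxyRotator([
    "http://user:pass@proxy1.example.net:8000",
    "http://user:pass@proxy2.example.net:8000",
])
```

Each request then uses opener.open(url, timeout=15). With the requests library, the equivalent is requests.get(url, proxies={"http": proxy, "https": proxy}, timeout=15).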

A few common issues and workarounds

It might seem straightforward, but putting all of this together isn’t without its challenges. Here are some of the most common pitfalls you’re likely to encounter when web scraping and strategies for overcoming them.

Timing issues

Correct timing is one of the most challenging aspects of automated scripting, because pages sometimes take longer to respond or load than expected. To handle this, set explicit timeouts on every request, retry failed requests after a short wait, and, when using Selenium, wait for the specific elements you need rather than for the whole page. On the Task Scheduler side, the “Stop the task if it runs longer than” setting keeps a stuck run from blocking the next one.
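Retrying with a growing wait is a common way to absorb slow or flaky responses. This is a minimal sketch of exponential backoff with jitter; the defaults (four attempts, starting at two seconds) are assumptions to tune per site. Catching OSError covers urllib’s URLError and socket timeouts, and the requests library’s RequestException also derives from it.

```python
import logging
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def with_retries(
    func: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 2.0,
    max_delay: float = 60.0,
    retry_on: Tuple[Type[BaseException], ...] = (OSError,),
) -> T:
    """Call func(); if it raises one of retry_on, wait and try again.

    Delays grow exponentially (2s, 4s, 8s, ... by default) plus random jitter,
    and the last exception is re-raised once all attempts are used.
    """
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except retry_on as exc:
            if attempt == attempts:
                raise
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            delay += random.uniform(0, delay / 2)  # jitter spreads out retries
            logging.warning("Attempt %d failed (%s); retrying in %.1fs", attempt, exc, delay)
            time.sleep(delay)
    raise ValueError("attempts must be at least 1")
```

Wrap each network call, for example with_retries(lambda: requests.get(url, timeout=15).text), and keep the total worst-case wait below the task’s time limit in Task Scheduler.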

Incorrect data extraction

Extracting the wrong data is common and can significantly slow progress if not caught quickly, especially when scraping many pages over long periods. Preventing it can get a bit technical:

- Double-check the code regularly.

- Set up throttling parameters that limit how often you request pages from a website and how much you fetch per cycle. Throttling reduces the load on the source website, lowers the chance of being blocked, and keeps your own system from being overwhelmed. When setting these limits, consider how often you’ll visit the site and whether it publishes rate limits (for example, a Crawl-delay in its robots.txt file). You should also track which records you’ve already extracted so later visits don’t produce duplicate results.

- Validate your XPath expressions periodically against the source website’s Document Object Model (DOM). XPath expressions tell your scraper where to find data on a page, and they break when a website changes its layout or formatting. Checking them regularly keeps extraction consistent, so you get accurate results every time you visit a page.
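Throttling, robots.txt rules, and duplicate tracking can live in one small wrapper. The sketch below uses only the standard library and assumes you pass in your own fetch function plus the site’s robots.txt lines; the five-second default interval is an assumption, and the seen set only covers a single run (persist it to a file to avoid duplicates across scheduled runs).

```python
import time
from typing import Callable, Iterable, Optional, Set
from urllib.robotparser import RobotFileParser


class PoliteFetcher:
    """Wrap a fetch function with throttling, robots.txt rules, and de-duplication."""

    def __init__(
        self,
        fetch: Callable[[str], str],
        min_interval: float = 5.0,
        robots_lines: Optional[Iterable[str]] = None,
        user_agent: str = "*",
    ) -> None:
        self.fetch = fetch
        self.min_interval = min_interval
        self.user_agent = user_agent
        self.seen: Set[str] = set()  # URLs already fetched in this run
        self._last_request = float("-inf")
        self.robots: Optional[RobotFileParser] = None
        if robots_lines is not None:
            self.robots = RobotFileParser()
            self.robots.parse(robots_lines)
            delay = self.robots.crawl_delay(user_agent)
            if delay:  # honor a site-requested Crawl-delay if it is longer
                self.min_interval = max(self.min_interval, float(delay))

    def get(self, url: str) -> Optional[str]:
        """Fetch url unless it is a duplicate or disallowed; otherwise return None."""
        if url in self.seen:
            return None
        if self.robots is not None and not self.robots.can_fetch(self.user_agent, url):
            return None
        wait = self.min_interval - (time.monotonic() - self._last_request)
        if wait > 0:
            time.sleep(wait)
        self._last_request = time.monotonic()
        self.seen.add(url)
        return self.fetch(url)
```

The robots.txt lines can come from the site itself, for example requests.get("https://example.com/robots.txt", timeout=10).text.splitlines().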

You can Google these terms and your specific issues to find a lot of help from the substantial Python user community.

Data sanitization

Cleaning up scraped data can be difficult but is often necessary, depending on your application’s needs (for example, filtering out irrelevant listings). Fortunately, Python tools such as Pandas are designed to clean large datasets, and libraries like LXML make it easier to produce well-formed XML documents.
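A typical Pandas cleanup trims whitespace, converts text prices to numbers, drops incomplete rows, filters out irrelevant listings, and removes duplicates. This minimal sketch assumes pandas is installed; the column names, the “ads” category filter, and the sample rows are all hypothetical.

```python
import pandas as pd

# Hypothetical raw rows as a scraper might return them.
raw = pd.DataFrame({
    "title": ["  Widget ", "Widget", "Gadget", None, "Sprocket"],
    "price": ["$9.99", "$9.99", "14.50", "$3.00", "n/a"],
    "category": ["tools", "tools", "tools", "tools", "ads"],
})


def clean(df: pd.DataFrame) -> pd.DataFrame:
    out = df.copy()
    out["title"] = out["title"].str.strip()
    # Keep digits and the decimal point; anything unparseable becomes NaN.
    out["price"] = pd.to_numeric(
        out["price"].str.replace(r"[^0-9.]", "", regex=True), errors="coerce"
    )
    out = out.dropna(subset=["title", "price"])   # incomplete rows
    out = out[out["category"] != "ads"]           # irrelevant listings
    return out.drop_duplicates(subset=["title", "price"]).reset_index(drop=True)


cleaned = clean(raw)
```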

If you find your automated code is returning results that are difficult to understand, you will have to dig deeper into the technical side of data sanitization. Fortunately, the Python community at large will again be very helpful, especially for common issues.

Sorting through coding issues while working on auto-scheduling scripts requires constant revisions, close monitoring of output, and maintenance fixes for intermittent errors. It’s a very involved process. But by following these steps and preparing scripts carefully beforehand, small to mid-sized businesses should be well placed to set up effective automated web scraping projects on their own.

Utilizing Advanced Features in the Windows Task Scheduler Console

Windows Task Scheduler’s advanced features allow for more complex automation. Triggers can start a task on a schedule, at startup, at logon, when the computer is idle, or when a specific event is written to the Windows event log. Tasks can run under a specified user account, with that account’s password stored securely by Windows, so a script can run even when no one is logged on. You can also configure repeat intervals and export task definitions as XML files for backup or transfer to another machine.

These advanced features are especially useful for larger or more complex scraping projects. For instance, running a task under a dedicated account that has only the permissions the scraper needs limits what could be exposed if the script or its credentials are ever compromised.

Setting up automated tasks and triggers in Windows Task Scheduler requires a few simple steps.

- Open the application from the Start menu and create a new task. Fill in the usual details, such as the name, description, and security options (which account the task runs under and whether it runs only when that user is logged on).

- Select the Triggers tab to set when the task should run, based on a schedule or on events such as logon or startup. Scheduled triggers also let you run scrapers at predictable times, such as off-peak hours, instead of whenever someone happens to start them.

- Configure execution conditions on the Conditions tab. These help with resource management: you can start the task only when the computer is idle, only when it is on AC power, or only when a particular network connection is available, and you can let Task Scheduler wake the computer to run it. Event-based triggers, set on the Triggers tab, complement these conditions by starting tasks after specific events, such as a user logging on, rather than only at fixed times of day. Together, they give you finer control over when your scraper uses your computer’s resources.

- Go to the Actions tab and specify the program or script to run each time the trigger fires (for a Python scraper, python.exe with your script’s path as the argument). Once the task works, you can export it as an XML file for backup or to move it to another machine. Stored passwords are not included in the exported XML; Windows keeps them separately, and you enter them again when you import the task.

- Finally, review the settings and click OK. If the task is set to run whether or not a user is logged on, Windows will prompt for that account’s password. This simple setup belies how far you can take your automated scripts, so explore the Task Scheduler console to see all your options.
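Exporting and re-importing tasks can also be scripted with schtasks.exe. This minimal sketch builds the commands and saves an export on Windows; the task name and paths are hypothetical, and writing the file as UTF-16 assumes the exported XML declares that encoding, as Task Scheduler exports typically do.

```python
import subprocess
from pathlib import Path
from typing import List


def export_command(task_name: str) -> List[str]:
    """schtasks command that prints a task's definition as XML."""
    return ["schtasks", "/Query", "/TN", task_name, "/XML"]


def import_command(task_name: str, xml_path: str) -> List[str]:
    """schtasks command that recreates a task from an exported XML file."""
    return ["schtasks", "/Create", "/TN", task_name, "/XML", xml_path]


def backup_task(task_name: str, dest: str) -> Path:
    """Save a task definition to dest (Windows only)."""
    result = subprocess.run(
        export_command(task_name), capture_output=True, text=True, check=True
    )
    path = Path(dest)
    # Assumption: the exported XML declares UTF-16, so the file matches it.
    path.write_text(result.stdout, encoding="utf-16")
    return path
```

Because passwords are not part of the XML, a task that runs as a specific user needs /RU and /RP added to the import command on the new machine.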

Further Challenges Small Businesses May Face

Small businesses may run into some other web scraping challenges and should prepare for them.

Data types

One of the main challenges small and medium-sized businesses face when using automated web scraping services is determining which types of data to collect. Web scraping programs can acquire various types of data, ranging from emails and contact information to product descriptions and pricing. Depending on your specific needs, different combinations of data — and therefore different tools for web scraping — may be needed to get all the necessary pieces.

For example, some businesses may only need basic information such as names and emails, while others need a richer combination that includes images or detailed product specifications. Businesses should decide which types of data they actually need, so they don’t waste time and resources trying to capture too much at once.

Infrastructure

Small to mid-sized businesses often lack the computing resources that larger organizations have. If their own servers fall short on power or bandwidth, they may need to rent cloud services such as Amazon EC2, Microsoft Azure, or DigitalOcean, which makes running an automated setup more costly.

In general, there are a few infrastructure-related costs to keep an eye out for:

- Networking costs: If a small or mid-sized business doesn’t already have the necessary infrastructure for its web scraping projects, it will need to budget for network equipment such as routers or switches.

- Dedicated server installation costs: A dedicated server will be needed if businesses are looking into adding more capacity or resources that can handle larger amounts of traffic while ensuring all operations continue without interruption (including during peak times). Installation costs could include upfront hardware purchases, enterprise-level software support down the line, and other maintenance needs such as storage backups and cooling solutions.

- Security expenses: Businesses must consider security when web scraping, and data privacy regulations must be taken into account whenever personal information is collected from websites. Depending on the protection required, this can add costs for firewalls, antivirus software, SSL/TLS certificates, and one-time or annual hardware and software fees.

- Website monitoring tools: Businesses may invest in website performance monitoring services or software that helps monitor, troubleshoot, and alert them to potential server issues. These options could include SaaS offerings or open-source solutions that require one-time setup fees or subscription costs based on usage levels. This can allow businesses to quickly identify any web scraping-related issues and potentially resolve them faster, reducing unplanned downtime.

Smaller businesses must carefully assess these costs against automation strategies before deciding to explore anything beyond web scraping with Python and Windows Task Scheduler for their business needs. More advanced scraping means more monetary and computational resources.

Technical and operational know-how

Smaller businesses are often restricted by their budget and may not have the technical expertise or capacity to manage large-scale web scraping projects on their own. Automating web scraping services requires the right operations to run effectively. These include ensuring reliable infrastructure, understanding how data can be reliably accessed from external sources, data security protocols, maintenance cycles for software updates/upgrades, and more.

Decisions must also be made about hiring new personnel and managing third-party service providers, such as proxy providers. Some things to consider include:

- The staffing needs for web scraping services, including roles such as data scientists, software engineers, etc.

- A hiring strategy that weighs both technical aptitude and business acumen, or that provides training and support materials where needed.

- Third-party service providers who can provide reliable infrastructure (proxy servers) along with continuous software updates and support on an ongoing basis.

Troubleshooting and testing issues

You must troubleshoot potential issues with the quality, quantity, and freshness of your data. It can be challenging for a smaller organization to balance efficiency with keeping databases up to date. Adjust settings such as how often the task runs so your data stays current without spending time and resources updating it more often than necessary. It also helps to collect only relevant, valuable data, which saves time when searching through it later.

You’ll also need effective error handling and notifications so that someone learns quickly about any errors during automated runs. Build in proactive measures, such as routine debugging and maintenance windows, in case something unexpected happens or results need periodic adjustment. Finally, functional testing lets you confirm that everything works as expected before you change anything.
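A small wrapper can turn failures into both a log entry and an alert, and return an exit code Task Scheduler records as the task’s last run result (a return value of 1 appears as 0x1). In this minimal sketch, run_job is a hypothetical helper, job() is assumed to return the number of records collected, and notify is a placeholder hook you would connect to email or chat; treating zero records as suspicious is a design choice, not a rule.

```python
import logging
from typing import Callable

logger = logging.getLogger("webscraper")


def run_job(
    job: Callable[[], int],
    notify: Callable[[str], None] = lambda message: None,
) -> int:
    """Run a scrape, log the outcome, and return a process exit code."""
    try:
        count = job()
    except Exception as exc:  # broad on purpose: any failure should be reported
        logger.exception("Scrape failed")
        notify(f"Web scraper failed: {exc!r}")
        return 1  # non-zero shows up in the task's Last Run Result
    if count == 0:
        # An empty result often means the site changed and selectors are stale.
        logger.warning("Scrape finished but collected no records")
        notify("Web scraper collected 0 records; check selectors.")
    else:
        logger.info("Scrape finished with %d records", count)
    return 0
```

In the scheduled script, this would typically be called as raise SystemExit(run_job(scrape_all, notify=send_alert)), where scrape_all and send_alert are your own functions.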

Using Proxy Servers and Rayobyte’s Web Scraping API Providers

It’s important to ensure your company follows web scraping best practices, which can include using proxy servers and/or web scraping providers. Both strategies can help protect your own network and make collecting data from other sites more reliable.

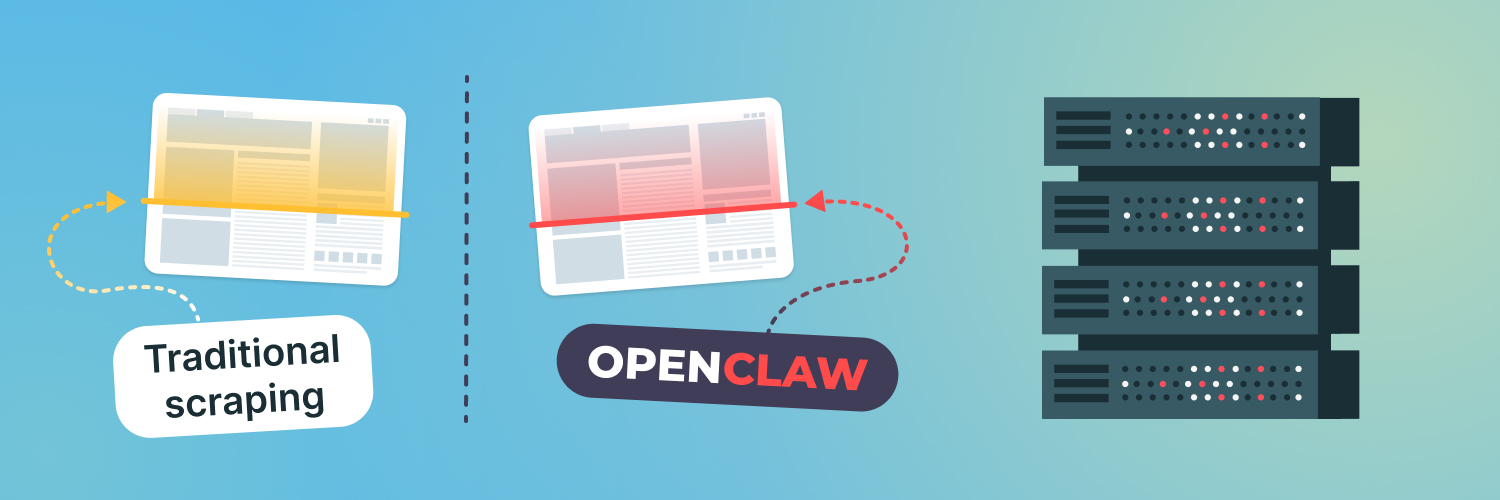

Proxy servers and web scraping providers help you collect web data from a variety of sources more securely. Rayobyte’s Web Scraping APIs are automated programs that can crawl web pages, download files, extract content, and store it. Proxy servers act as intermediaries between internet users and websites, forwarding requests on your behalf so your scraper is less likely to be blocked by the target site.

For example, when your scraper sends requests through a proxy, target websites see the proxy’s IP address rather than your organization’s, which keeps details of your own network from being exposed to outside parties.

Automated scraping lets business owners acquire large amounts of valuable data quickly. Using a third-party provider for web data collection has several advantages. For one, scraping providers are built to collect and organize data from many websites quickly and efficiently. Because most web scraping requires custom bots or scripts, outsourcing it can save time for businesses that lack coding expertise, and providers often offer ready-made templates for common scraping jobs.

Plus, using proxy servers from these same providers is convenient, since they typically offer large pools of IP addresses in many countries, which helps preserve anonymity and lets you spread requests out to collect data faster.

Types of proxy servers

Residential proxies use IP addresses that internet service providers assign to ordinary households, so your requests look like those of a regular home user browsing the web. Because they blend in with normal traffic, they are less likely to be blocked when you scrape large amounts of data.

Data center proxies use IP addresses hosted on servers in commercial data centers rather than addresses that ISPs assign to homes. Their main advantages are speed, reliability, and scalability: data center connections are fast and stable, and providers can offer large numbers of IPs across many locations. The trade-off is that websites can more easily recognize data center IP ranges, so these proxies are more likely to be blocked than residential ones.

ISP proxies are an intermediate solution that combines features of residential and data center proxies. They use IP addresses registered to internet service providers but are hosted on data center servers, so you get data center speed and stability together with the legitimacy associated with an ISP-assigned address. This gives you a degree of control that can minimize occasional latency issues.

A dependable proxy server is key for any successful data scraping operation. Rayobyte offers sophisticated features that can automate certain tasks, making it easier to manage resources and keep scrapers under the radar. Discover our proxies today and take your scraping to the next level.

Final Take

Automating web scraping with Python and Windows Task Scheduler provides many advantages to businesses that need up-to-date information. Aside from the obvious convenience of automated data collection, these programs can be fine-tuned to provide more accurate results than manual extraction. With efficient use of resources and minimal human intervention required, Python and Windows automation is an excellent solution for companies in search of cost-effective ways to manage large amounts of data on tight budgets and without taking too much time out of their busy schedules.

The information contained within this article, including information posted by official staff, guest-submitted material, message board postings, or other third-party material is presented solely for the purposes of education and furtherance of the knowledge of the reader. All trademarks used in this publication are hereby acknowledged as the property of their respective owners.