How to Use Python to Scrape Google Finance

Google Finance is a valuable resource for investors, analysts, and stock market enthusiasts. It provides real-time stock quotes, financial data, and the latest financial news. This comprehensive platform gathers data from multiple sources to offer a holistic view of the financial industry. With data’s growing importance in financial decision-making, accessing it efficiently is vital. This is where Python comes in.

Python can automate data collection from Google Finance, saving you time and ensuring you have the most up-to-date information at your fingertips.

Try Our Web Scraping API

The ideal tool for effective, fast web scraping.

Let’s walk through how to use Python to scrape Google Finance, with step-by-step instructions and examples.

What is Web Scraping

Web scraping is a technique used to extract data from websites. It allows you to gather information that is publicly available on the web and use it for various purposes. This includes data analysis, research, and decision-making. For investors and analysts, web scraping can be particularly useful for collecting financial data from sources like Google Finance.

Importance of Proxies for Web Scraping

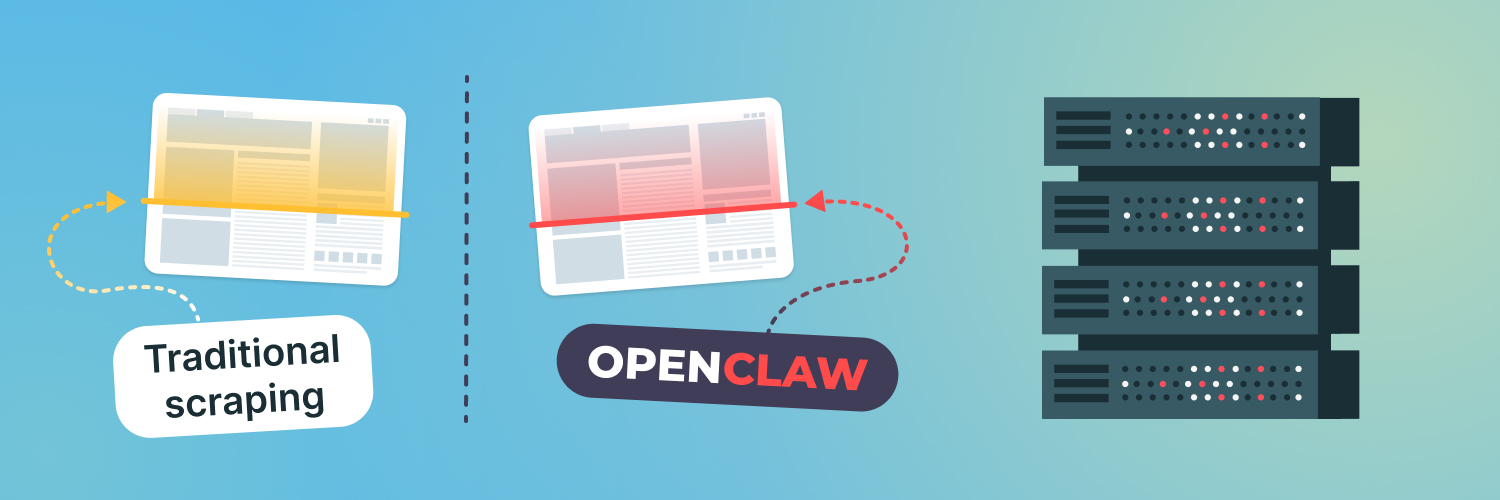

When scraping data from websites like Google Finance, it’s important to use proxies. Proxies help you avoid IP blocks that can occur if you make too many requests from a single IP address. By rotating through multiple proxies, you can mimic human browsing behavior and reduce the risk of being blocked. This ensures that your web scraping activities remain uninterrupted and efficient.
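As a rough sketch of the rotation idea, the snippet below cycles through a small pool of proxies and builds the mapping that `requests` accepts through its `proxies` argument. The proxy addresses and the `proxy_rotation` helper are hypothetical placeholders, not real endpoints.

```python
from itertools import cycle

# Hypothetical proxy addresses; replace them with proxies you actually control.
PROXY_URLS = [
    "http://proxy1.example.com:8080",
    "http://proxy2.example.com:8080",
    "http://proxy3.example.com:8080",
]


def proxy_rotation(proxy_urls):
    """Yield requests-style proxy mappings, looping over proxy_urls forever."""
    # cycle() starts over from the first proxy after reaching the last one.
    for proxy_url in cycle(proxy_urls):
        # Route both plain HTTP and HTTPS traffic through the same proxy.
        yield {"http": proxy_url, "https": proxy_url}


rotation = proxy_rotation(PROXY_URLS)
for _ in range(4):
    # The fourth value wraps around to the first proxy again.
    print(next(rotation)["https"])
```

You could then hand the next mapping to each call, for example `requests.get(url, proxies=next(rotation), timeout=10)`.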

How to Set Up Your Environment

Install Python and Essential Libraries (e.g., `requests`, BeautifulSoup)

To get started with web scraping using Python, you’ll need to install Python and several libraries that will help you fetch and parse data. First, download and install Python. Once installed, you can use the package manager `pip` to install the necessary libraries. Open your terminal or command prompt and run the following commands:

```bash
pip install requests
pip install beautifulsoup4
```

The `requests` library will allow you to send HTTP requests to Google Finance, while `BeautifulSoup` will help you parse the HTML content and extract the data you need.

Set Up a Virtual Environment for Python Projects

Using a virtual environment is a good practice when working on Python projects. It helps you manage dependencies and avoid conflicts between different projects. To set up a virtual environment, follow these steps:

- Install the `virtualenv` package if you haven’t already:

```bash
pip install virtualenv
```

- Create a new virtual environment for your project:

```bash
virtualenv myenv
```

- Activate the virtual environment:

– On Windows:

```bash
myenv\Scripts\activate
```

– On macOS and Linux:

```bash
source myenv/bin/activate
```

Now, you have a dedicated environment for your web scraping project, and you can install the necessary libraries in it.

How to Write Your Python Script

Now that your environment is set up, it’s time to get into the exciting part—writing your Python script! The first step is to fetch the HTML content of the Google Finance page you’re interested in. For this, we’ll use the trusty `requests` library.

Here’s a fun example script to get you started:

```python
import requests

# Define the URL of the Google Finance page for GOOGL stock
url = 'https://www.google.com/finance/quote/GOOGL:NASDAQ'

# Send a request to fetch the HTML content (the timeout avoids waiting forever)
response = requests.get(url, timeout=10)

# Check if the request was successful
if response.status_code == 200:
    html_content = response.text
    print('HTML content fetched successfully!')
else:
    print(f'Failed to fetch HTML content (status code {response.status_code})')
```

This script is like sending a friendly knock on Google Finance’s door, asking for the web page’s content. If Google Finance responds positively (status code 200), you get the HTML content of the page.

Fetching HTML content is the first step to gathering stock data with Python. With this content in hand, you’re ready to extract the specific information you need.
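If you want to fetch more than one stock, a small helper can build the page address for you. This is a minimal sketch that assumes other quotes follow the same `TICKER:EXCHANGE` pattern as the GOOGL URL above; the `build_quote_url` name is made up for illustration.

```python
from urllib.parse import quote

BASE_URL = "https://www.google.com/finance/quote"


def build_quote_url(ticker, exchange):
    """Build a quote-page URL in the TICKER:EXCHANGE form used above."""
    # Normalize the parts so " googl" and "GOOGL" produce the same URL.
    symbol = f"{ticker.strip().upper()}:{exchange.strip().upper()}"
    # Keep the colon readable and percent-encode any other unsafe character.
    return f"{BASE_URL}/{quote(symbol, safe=':')}"


urls = [build_quote_url(t, e) for t, e in [("GOOGL", "NASDAQ"), ("msft", "nasdaq")]]
print(urls)
```

Each resulting URL can be passed to `requests.get` exactly as in the script above.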

Using BeautifulSoup to Parse HTML and Extract Relevant Information

Once you have the HTML content, the next step is to parse it and extract the relevant data. `BeautifulSoup` makes this easy. Here’s how you can use it to extract the stock price:

```python
from bs4 import BeautifulSoup

# Parse the HTML fetched in the previous step
soup = BeautifulSoup(html_content, 'html.parser')

# Look up the element that holds the current price
price_element = soup.find('div', class_='YMlKec fxKbKc')

if price_element:
    stock_price = price_element.text
    print(f'Stock Price: {stock_price}')
else:
    print('Failed to find the stock price element')
```

In this example, we use `BeautifulSoup` to parse the HTML content and find the `div` element with the class `YMlKec fxKbKc`, which contains the stock price. If the element is found, we extract and print the stock price.
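The extracted price is still a string, so you will usually want to convert it to a number before doing any calculations. The sketch below assumes the text uses US-style formatting such as `$1,234.56` (comma for thousands, dot for decimals); `parse_price` is a hypothetical helper, not part of `BeautifulSoup`.

```python
import re
from decimal import Decimal

# Matches an optional minus sign, digits with optional commas, and decimals.
_PRICE_PATTERN = re.compile(r"-?\d[\d,]*(?:\.\d+)?")


def parse_price(text):
    """Convert scraped price text such as '$1,234.56' into a Decimal."""
    match = _PRICE_PATTERN.search(text)
    if match is None:
        raise ValueError(f"No numeric value found in {text!r}")
    # Remove thousands separators before converting.
    return Decimal(match.group().replace(",", ""))


print(parse_price("$1,234.56"))
```

`Decimal` avoids the rounding surprises of binary floats, which matters when you compare or sum prices.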

How to Handle Different Types of Financial Data

In addition to current stock prices, extracting historical data from Google Finance can provide valuable insights for trend analysis and investment decisions. Using Python and BeautifulSoup, you can automate the process of fetching historical stock prices efficiently.

Here’s an example of how you can scrape historical data:

```python
import requests
from bs4 import BeautifulSoup

url = 'https://www.google.com/finance/quote/GOOGL:NASDAQ?sa=H'
response = requests.get(url, timeout=10)
soup = BeautifulSoup(response.text, 'html.parser')

# The class names below are placeholders; replace them with the ones
# you find when inspecting the page
historical_data_elements = soup.find_all('div', class_='historical-data-element-class')

historical_data = []
for element in historical_data_elements:
    date_element = element.find('div', class_='date-class')
    price_element = element.find('div', class_='close-price-class')

    # Skip rows that are missing either value instead of crashing
    if date_element and price_element:
        historical_data.append({
            'date': date_element.text,
            'close_price': price_element.text,
        })

print(historical_data)
```

This script requests the Google Finance page for the “GOOGL” stock on NASDAQ and parses the HTML for the elements that hold the date and closing price. The class names in the example are placeholders, so inspect the page and substitute the actual ones before running it. With historical data collected, you can analyze past performance and look for patterns that may inform your view of future stock movements.
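One simple way to start analyzing past performance is to compute the percentage change between consecutive closing prices. The sketch below assumes the records have already been cleaned so that dates are ISO strings (`YYYY-MM-DD`) and prices are numbers; the `daily_returns` helper and the sample values are illustrative only, not real quotes.

```python
def daily_returns(records):
    """Return the percentage change between consecutive closing prices."""
    # ISO dates (YYYY-MM-DD) sort chronologically when compared as strings.
    ordered = sorted(records, key=lambda record: record["date"])
    changes = []
    for previous, current in zip(ordered, ordered[1:]):
        change = (current["close_price"] - previous["close_price"]) / previous["close_price"]
        changes.append({"date": current["date"], "pct_change": round(change * 100, 2)})
    return changes


# Illustrative values only.
sample = [
    {"date": "2023-06-01", "close_price": 1500.0},
    {"date": "2023-06-02", "close_price": 1520.0},
    {"date": "2023-06-03", "close_price": 1490.0},
]
print(daily_returns(sample))
```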

How to Extract News Headlines

Google Finance also provides the latest news headlines related to a stock. Scraping these headlines can be useful for staying updated with the latest developments and market trends. Using Python and BeautifulSoup, you can easily collect these headlines for analysis.

Here’s how you can do it:

```python
import requests
from bs4 import BeautifulSoup

url = 'https://www.google.com/finance/quote/GOOGL:NASDAQ'
response = requests.get(url, timeout=10)
soup = BeautifulSoup(response.text, 'html.parser')

# The class names below are placeholders; replace them with the ones
# you find when inspecting the page
news_elements = soup.find_all('div', class_='news-element-class')

news_headlines = []
for element in news_elements:
    headline_element = element.find('a', class_='headline-class')

    # Skip news blocks that have no headline link
    if headline_element:
        news_headlines.append(headline_element.text)

print(news_headlines)
```

This script sends a request to the Google Finance page for the stock “GOOGL” on NASDAQ, then uses BeautifulSoup to parse the HTML content. It looks for specific HTML elements that contain the news headlines and extracts the text. By doing this, you can keep track of important news that may affect stock prices.
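Scraped headlines often carry stray whitespace or appear more than once on a page. As a small, hedged sketch, the hypothetical `clean_headlines` helper below tidies the list while keeping the original order; the sample headlines are made up.

```python
def clean_headlines(raw_headlines):
    """Normalize whitespace and drop empty or repeated headlines, keeping order."""
    seen = set()
    cleaned = []
    for headline in raw_headlines:
        # Collapse runs of spaces, tabs, and newlines into single spaces.
        text = " ".join(headline.split())
        # Compare case-insensitively so "Results" and "results" count as one.
        key = text.casefold()
        if text and key not in seen:
            seen.add(key)
            cleaned.append(text)
    return cleaned


# Made-up headlines for illustration.
raw = ["  Example Corp reports\n results ", "Example Corp reports results", "", "Markets close higher"]
print(clean_headlines(raw))
```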

Storing and Analyzing the Data

Once you’ve scraped data from Google Finance using Python, you’ll want to save it for later analysis. A common and easy-to-use format for storing tabular data is CSV (Comma-Separated Values). Saving your data in a CSV file makes it simple to share and import into various data analysis tools.

Saving data to a CSV file in Python is straightforward, especially if you use the Pandas library. Pandas provides powerful tools for handling and storing data efficiently, so your financial data stays well-organized and easily accessible for future use.

Here’s a simple example of how to save stock prices to a CSV file using Pandas:

```python
import pandas as pd

# Assume data is stored in a Pandas DataFrame
data = pd.DataFrame({
    'Date': ['2023-06-01', '2023-06-02', '2023-06-03'],
    'Stock Price': [1500, 1520, 1490]
})

# Save the DataFrame to a CSV file
data.to_csv('stock_prices.csv', index=False)
```

In this example, we create a DataFrame with stock prices and their corresponding dates. We then use the `to_csv` method to save the DataFrame to a CSV file named `stock_prices.csv`. The `index=False` argument ensures that the DataFrame’s index is not written to the file, keeping the CSV clean and easy to read.

Using CSV files to store your scraped data has several advantages. They are lightweight, widely supported, and can be opened with any text editor or spreadsheet software like Microsoft Excel or Google Sheets. This flexibility allows you to easily share your data with others or use it in various data analysis tools.
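If you would rather not depend on Pandas, Python’s built-in `csv` module can write the same kind of data. The sketch below assumes the scraped records are dictionaries with `date` and `close_price` keys, like the historical data collected earlier; `write_csv` is a hypothetical helper, and the values are illustrative.

```python
import csv
import io

# Illustrative records in the same shape as the scraped historical data.
historical_data = [
    {"date": "2023-06-01", "close_price": 1500},
    {"date": "2023-06-02", "close_price": 1520},
    {"date": "2023-06-03", "close_price": 1490},
]


def write_csv(records, csv_file):
    """Write the records to an open text file as CSV with a header row."""
    writer = csv.DictWriter(csv_file, fieldnames=["date", "close_price"])
    writer.writeheader()
    writer.writerows(records)


# An in-memory buffer keeps the demo free of side effects.
buffer = io.StringIO()
write_csv(historical_data, buffer)
print(buffer.getvalue())
```

To write a real file, open it with `open('historical_prices.csv', 'w', newline='')` and pass the file object to `write_csv`; the `newline=''` argument is what the `csv` module recommends to avoid blank lines on Windows.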

Saving Data to a Database

For more advanced data storage and querying, you might want to save the data to a database. SQLite is a lightweight, file-based database that is easy to use with Python. This method allows you to efficiently store, query, and manage large amounts of financial data.

Here’s how you can save the stock prices to an SQLite database:

```python
import sqlite3

# Connect to the SQLite database (or create it if it doesn't exist)
conn = sqlite3.connect('stock_data.db')
cursor = conn.cursor()

# Create a new table for storing stock prices if it doesn't already exist
cursor.execute('''
    CREATE TABLE IF NOT EXISTS stock_prices (
        date TEXT,
        close_price REAL
    )
''')

# Insert historical data into the table
historical_data = [
    {'date': '2023-06-01', 'close_price': 1500},
    {'date': '2023-06-02', 'close_price': 1520},
    {'date': '2023-06-03', 'close_price': 1490}
]

for data in historical_data:
    cursor.execute('''
        INSERT INTO stock_prices (date, close_price)
        VALUES (?, ?)
    ''', (data['date'], data['close_price']))

# Commit the changes and close the connection
conn.commit()
conn.close()

print('Data saved to stock_data.db')
```

In this example, we connect to an SQLite database named stock_data.db. We create a table called stock_prices with columns for the date and closing price. We then insert historical stock data into this table. Finally, we commit the changes and close the database connection.

Using a database like SQLite offers several advantages over CSV files. Databases are designed for efficient data storage and retrieval, making them ideal for handling large datasets. They also support complex queries, allowing you to perform advanced analysis directly within the database.
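To give a feel for querying inside the database, here is a minimal sketch that computes an average and a maximum with SQL. It uses an in-memory database and illustrative values so it runs on its own; with your real data you would connect to `stock_data.db` instead.

```python
import sqlite3

# An in-memory database keeps this sketch self-contained.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE stock_prices (date TEXT, close_price REAL)")
conn.executemany(
    "INSERT INTO stock_prices (date, close_price) VALUES (?, ?)",
    [("2023-06-01", 1500), ("2023-06-02", 1520), ("2023-06-03", 1490)],
)

# Aggregate inside the database instead of loading every row into Python.
average_close, highest_close = conn.execute(
    "SELECT AVG(close_price), MAX(close_price) FROM stock_prices WHERE date >= ?",
    ("2023-06-02",),
).fetchone()

print(average_close, highest_close)
conn.close()
```

The `?` placeholder passes the date as a parameter, the same safe pattern used for the inserts above.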

Analyzing Data with Pandas

Pandas is an essential library in Python that makes data analysis and manipulation much easier. Think of it as a powerful toolbox for handling data, especially when working with financial information from sources like Google Finance. Pandas allows you to organize, filter, and perform complex calculations on your data efficiently. It’s particularly useful for transforming messy data into a structured format that’s easy to analyze.

With Pandas, you can effortlessly load data from various formats, including CSV, Excel, and even directly from the web. Once your data is loaded, Pandas provides a wide range of functions to clean, transform, and analyze it. For instance, you can remove duplicate entries, fill in missing values, and perform statistical analysis with just a few lines of code.

One of Pandas’ most powerful features is the DataFrame, a two-dimensional, size-mutable, and potentially heterogeneous tabular data structure with labeled axes (rows and columns). This structure allows you to access and manipulate data quickly and intuitively. For example, if you’re working with stock data, you can easily calculate the average price, filter data for specific dates, or even merge multiple datasets for a comprehensive analysis.

Here’s a simple example of how you can use Pandas for advanced data analysis with data from Google Finance:

```python
import pandas as pd

# Load data into a Pandas DataFrame, parsing the Date column as dates
data = pd.read_csv('google_finance_data.csv', parse_dates=['Date'])

# Display the first few rows of the DataFrame
print(data.head())

# Calculate the average stock price
average_price = data['Stock Price'].mean()
print(f"Average Stock Price: {average_price}")

# Filter data for a specific date range
filtered_data = data[(data['Date'] >= '2023-01-01') & (data['Date'] <= '2023-12-31')]
print(filtered_data)

# Merge multiple datasets on their shared Date column
additional_data = pd.read_csv('additional_finance_data.csv', parse_dates=['Date'])
merged_data = pd.merge(data, additional_data, on='Date')
print(merged_data)
```

Advanced Data Extraction Techniques

Sometimes, the information on Google Finance is loaded using JavaScript, which makes it hard to get with just `requests` and `BeautifulSoup`. In these cases, you can use a tool called Selenium. Selenium is great for web scraping because it can handle dynamic content that changes after the page loads. It acts like a real user clicking around the website.

Here’s a simple way to use Selenium to get Google Finance data using Python and handle JavaScript-loaded content efficiently:

```python
from selenium import webdriver
from bs4 import BeautifulSoup

# Initialize Chrome WebDriver
driver = webdriver.Chrome()

# Load the Google Finance page for GOOGL stock
driver.get('https://www.google.com/finance/quote/GOOGL:NASDAQ')

# Get the page source (HTML content)
html_content = driver.page_source

# Close the WebDriver
driver.quit()

# Parse the HTML content with BeautifulSoup
soup = BeautifulSoup(html_content, 'html.parser')

# Extract the stock price (the same div used in the earlier example)
price_element = soup.find('div', class_='YMlKec fxKbKc')

# Print the extracted stock price if the element was found
if price_element:
    print("Stock Price:", price_element.text)
else:
    print("Failed to find the stock price element")
```

Extracting Data from Tables and Complex Structures

BeautifulSoup can also pull data out of tables on Google Finance pages. The example below looks up a table, walks through its rows, and prints the text of each cell. Check the class name passed to `find` against the page you are scraping, since it must match the table you want to extract.

```python
# Assuming 'soup' and other necessary setup for BeautifulSoup are defined

# Find the table element
table = soup.find('table', class_='W(100%) M(0)')

# Check if table is found before proceeding
if table:
    # Find all rows in the table
    rows = table.find_all('tr')

    # Check if rows are found before iterating
    if rows:
        # Iterate through each row
        for row in rows:
            # Find all cells in the row
            cells = row.find_all('td')

            # Iterate through each cell in the row and print its text
            for cell in cells:
                print(cell.text.strip())  # Use strip() to remove leading/trailing whitespace
    else:
        print("No rows found in the table")
else:
    print("Table not found on the page")
```

Automating the Script with Scheduling

You might want to run your web scraping script for Google Finance data automatically at regular intervals to keep your data up-to-date. You can use tools like `cron` on Unix-based systems or Task Scheduler on Windows to schedule your script. Set up cron jobs to automatically run your Python scripts to fetch Google Finance data at scheduled times. This means you don’t have to manually start your scripts every time you want to collect financial data.

```bash
# Open your crontab for editing
crontab -e

# Then add this line to run the script every day at midnight
0 0 * * * /usr/bin/python3 /path/to/your_script.py
```

Using Task Scheduler on Windows

On Windows, Task Scheduler does the same job as `cron`. It runs your script at the times you choose, for example every morning at 9 AM or, as in the steps below, daily at midnight, so your Google Finance data stays current without manual intervention:

- Open Task Scheduler and select “Create Basic Task”.

- Configure the task to run daily at midnight, executing your Python script.

Best Practices and Ethical Considerations

Adhere to website terms of service when conducting web scraping activities, especially when using Python to scrape Google Finance data. Ensuring compliance is crucial to avoid legal repercussions. Ethical scraping practices not only protect you legally but also respect the website’s policies.

Implement rate limiting and request randomization when using Python to scrape Google Finance data. These techniques help minimize server load and uphold ethical scraping standards. By pacing your requests and varying their timing, you avoid overwhelming the server and reduce the risk of being blocked.
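A minimal sketch of rate limiting with randomized timing might look like the following. The `paced` helper and the 2 to 5 second delay range are assumptions chosen for illustration; pick values that suit the site you are working with.

```python
import random
import time


def paced(items, min_delay=2.0, max_delay=5.0, sleep=time.sleep):
    """Yield items one at a time, pausing a random interval between them."""
    for index, item in enumerate(items):
        if index > 0:
            # A random pause avoids a fixed, machine-like request rhythm.
            sleep(random.uniform(min_delay, max_delay))
        yield item
```

You could wrap your list of page URLs with it, as in `for url in paced(urls): response = requests.get(url, timeout=10)`, so every request after the first waits a random interval. The `sleep` parameter exists only so the pause can be swapped out, for example in tests.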

Conclusion

Using Python to scrape Google Finance can greatly enhance your ability to collect and analyze financial data. By automating the data collection process, you can save time and ensure that you always have the most up-to-date information. Whether you’re interested in current stock prices, historical data, or the latest news headlines, Python offers powerful tools to help you get the data you need.

We hope you found our tutorial on how to use Python to scrape Google Finance data helpful. If you have any questions or suggestions for future topics, feel free to contact us. We carefully review all inquiries and are eager to cover subjects that interest our readers!

The information contained within this article, including information posted by official staff, guest-submitted material, message board postings, or other third-party material is presented solely for the purposes of education and furtherance of the knowledge of the reader. All trademarks used in this publication are hereby acknowledged as the property of their respective owners.