Best Methods For Collecting Qualitative Data

With over 328 million terabytes of data generated daily, there’s almost no limit to the amount of information out there, waiting to be collected, wrangled, and probed to answer nearly any question you can imagine. Despite the vast amounts of data available, it all falls into one of two categories: quantitative or qualitative.

If you want to collect data — whether to drive business insights, better understand a challenge, or simply satisfy your curiosity — you need to know the best methods to collect qualitative data and quantitative data. While both types are valuable, the strategies you’ll use to collect them are different.

Quantitative vs. Qualitative Data

Before we go into the data collection methods, let’s clarify the differences between qualitative and quantitative data.

Quantitative data

Quantitative data can be measured and expressed in numbers. This data type is based on observations, measurements, or computations and can be analyzed using mathematical or statistical techniques.

Quantitative data is the data you probably think of when you hear the word “data.” It’s all about the numbers — how many, how long, how heavy. Anything that can be counted is quantitative data, including age, height, weight, temperature, income, test scores, and more.

If you want to know how many people are visiting your website or how many widgets you sold last year, you’ll use quantitative data. A good rule of thumb is that if you can answer a question with a number, it’s quantitative data.

You can analyze quantitative data using various statistical techniques, including descriptive statistics, inferential statistics, regression analysis, and hypothesis testing. Quantitative data is frequently used in economics, finance, science, engineering, and social sciences to make informed conclusions based on empirical evidence.
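
As a quick illustration of descriptive statistics, here is a minimal Python sketch using only the standard library. The visit counts are invented for the example and stand in for whatever numbers you have actually measured.

```python
import statistics

# Hypothetical daily website visits for one work week (illustrative numbers only).
daily_visits = [120, 135, 150, 110, 160]

mean_visits = statistics.mean(daily_visits)      # arithmetic average
median_visits = statistics.median(daily_visits)  # middle value when sorted
spread = statistics.stdev(daily_visits)          # sample standard deviation

print(f"mean={mean_visits}, median={median_visits}, stdev={spread:.2f}")
```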

Scrape at Scale With Chromium Stealth Browser

Self-hosted, Linux-first, compatible with all automation frameworks.

Qualitative data

Sometimes, you want to go beyond the numbers to gather insights from people’s experiences. You need qualitative data. This type of data is descriptive. How do your customers feel about their interactions with you? What’s their favorite feature of your new product? With qualitative data, you get a narrative rather than isolated numbers and can learn about people’s attitudes, beliefs, and behaviors in specific situations.

Qualitative data collection methods can include analyzing experiences people have shared on blogs, review sites, forums, and social media. Online reviews are a great example of qualitative data.

You’ll also analyze qualitative data differently. Instead of using statistical software to analyze the data and derive numerical summaries and relationships between variables, you’ll code it and analyze the themes revealed.

Coding involves systematically sorting segments of your data into categories that reflect the underlying concepts or ideas the participants express. Software programs like NVivo or Atlas.ti can expedite this step.
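
To make the idea of coding concrete, here is a minimal sketch in Python. It assumes a small, hand-written codebook of keywords (the `CODEBOOK` dictionary and the sample reviews are hypothetical). It is not how NVivo or Atlas.ti work internally, and real coding usually involves human judgment rather than keyword matching alone.

```python
import re
from collections import defaultdict

# Hypothetical codebook: each code (theme) maps to keywords that signal it.
CODEBOOK = {
    "price": {"price", "expensive", "cheap", "cost"},
    "usability": {"easy", "confusing", "intuitive", "setup"},
    "support": {"support", "helpdesk", "refund"},
}

def code_segment(text, codebook=CODEBOOK):
    """Return the sorted list of codes whose keywords appear in the text."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return sorted(code for code, keywords in codebook.items() if words & keywords)

def code_corpus(segments, codebook=CODEBOOK):
    """Group each segment under every code it was assigned."""
    coded = defaultdict(list)
    for segment in segments:
        for code in code_segment(segment, codebook):
            coded[code].append(segment)
    return dict(coded)

# Invented sample reviews for illustration.
reviews = [
    "Setup was easy but the price is too high.",
    "Support never answered my refund request.",
    "Love the colour.",
]
coded_reviews = code_corpus(reviews)
for code, segments in coded_reviews.items():
    print(code, "->", segments)
```

A segment can receive several codes or none at all. Uncoded segments are worth reading by hand, since they often point to themes missing from the codebook.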

An Overview of Quantitative and Qualitative Data Collection Methods

The difference between qualitative and quantitative data collection methods is substantial. Quantitative data collection methods are highly structured, using standardized instruments to collect data systematically. These methods typically involve surveys, questionnaires, or experiments, which seek to collect numerical data that can be analyzed statistically.

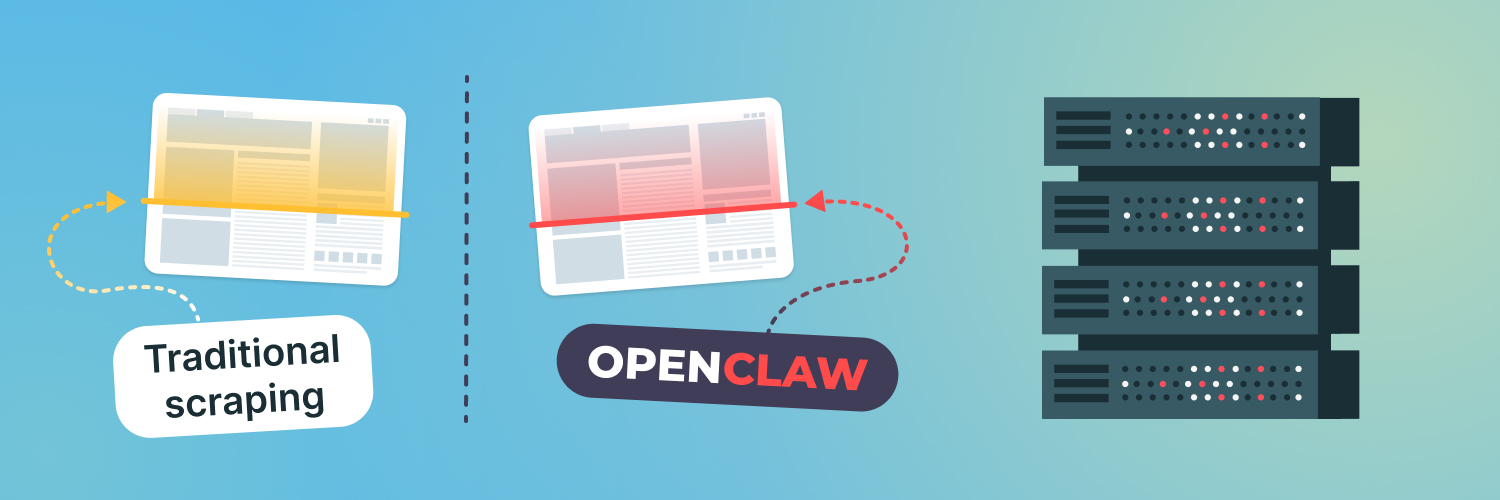

Data collection methods for qualitative and quantitative research both follow specific protocols, but qualitative methods are more open-ended. Alongside traditional approaches such as interviews and focus groups, qualitative data is increasingly collected via web scraping, a method that extracts information from websites. You can use web scraping techniques to gather large amounts of text-based data that can be analyzed for themes, concepts, and narratives. The data may include social media posts, reviews, comments, blog posts, and more.

Benefits of Collecting Qualitative Data Via the Internet

Traditional qualitative research methods include focus groups, interviews, live observation, and examining secondary sources such as old diaries and newspapers. All of these are time-consuming and expensive, and they are not always reliable. Collecting qualitative data via web scraping often yields information that’s far more valuable to modern businesses. You can get up-to-the-minute data for product research, customer sentiment, brand recognition, and many other business uses. Some of the benefits of newer methods of qualitative data collection include the following.

Increased access to information

The internet provides instant access to a deep well of information on almost any topic. From official websites maintained by authoritative institutions to personal blogs, you can find sources for any data you can imagine. Web scraping allows you to tap into this vast resource in a structured manner, gathering qualitative data that can provide rich insights. This could include everything from public opinion on a certain topic, customer reviews, and feedback to detailed narratives and experiences shared online. Web scraping provides more diverse and extensive data than you can access elsewhere or collect manually.

Easy searchability

You can quickly find data using a standard internet search or advanced features like filters and Boolean operators to refine your searches. It only takes a few minutes to learn advanced techniques that professional researchers use to locate targeted data. Web scraping tools often come with features that allow you to specify exactly what kind of data you’re looking for, making the search process highly efficient. This could involve specifying certain keywords, tags, or other identifiers. Some tools, such as Rayobyte’s Web Scraping API, even allow you to program your own criteria, ensuring that the data you collect is highly relevant to your research question. The automation of this process saves time and increases the precision of the data collection process.
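
The keyword criteria described above can be sketched in a few lines of Python. This is a generic illustration, not the interface of any particular scraping tool or API. The `matches` function and the sample posts are hypothetical, and it uses simple substring matching, so "camera" would also match "cameraman".

```python
def matches(text, all_of=(), any_of=(), none_of=()):
    """Boolean-style filter: AND terms, OR terms, and NOT terms."""
    lowered = text.lower()
    has_all = all(term.lower() in lowered for term in all_of)
    has_any = not any_of or any(term.lower() in lowered for term in any_of)
    has_none = not any(term.lower() in lowered for term in none_of)
    return has_all and has_any and has_none

# Invented sample posts for illustration.
posts = [
    "Acme's new phone has a great camera",
    "Acme phone giveaway, click here",
    "The weather is nice",
]

# Keep posts that mention the brand AND (camera OR battery) but NOT giveaways.
relevant = [
    post for post in posts
    if matches(post, all_of=["acme"], any_of=["camera", "battery"], none_of=["giveaway"])
]
print(relevant)
```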

Wide range of sources

One of the key benefits of web scraping for qualitative data collection is the sheer diversity of sources it can tap into. From social media platforms to online forums, blogs, news sites, and more, web scraping can collect data from a wide range of perspectives and contexts. This can enrich your research and provide a more comprehensive understanding of your research topic. You can even set up automated alerts that notify you whenever your brand is mentioned in any context. So, you aren’t limited to pre-defined sources.

Reduced cost

Collecting data from the internet is typically much cheaper than other methods of qualitative data collection. While you may need to invest in tools like web scrapers and proxies, it’s far more affordable than primary data collection methods. Manually collecting data, whether online or offline, is labor-intensive. It requires a lot of time and effort, which translates into higher costs if you need to hire personnel to do the work. With web scraping, the process is largely automated, reducing the need for human intervention and thereby cutting labor costs.

Time efficiency

Time is money. The speed and efficiency of web scraping mean that you can collect and analyze data much more quickly than with traditional methods. This not only reduces costs but also allows for more agile decision-making. If the data reveals a need for changes in strategy or operations, those changes can be implemented sooner, potentially saving or making money.

Multiple use cases

Whether you want to know which color your target audience likes best, what product you should roll out next, or if using Gen Z slang attracts or alienates your customers, you can find the answer by collecting internet data. There’s no question so profound or so frivolous that you can’t find a rich pool of data for it somewhere on the internet.

Some of the most common business use cases for secondary data analysis include the following.

Competitor analysis

By collecting qualitative data, you can gain insights into your competitors’ strategies and customer perceptions. Scraping product reviews, customer comments, or forum discussions can help you understand what customers appreciate or dislike about your competitors’ offerings. This knowledge can guide you in refining your own products or services or even identifying market gaps to exploit.

Market research

Qualitative data is essential for understanding your customers’ behaviors, preferences, needs, and pain points. Through web scraping, you can gather this data from sources like social media platforms, review sites, and forums, giving you a deeper understanding of your target market. This can inform your product development, marketing strategies, and business planning.

Lead generation

Collecting qualitative data can help you identify potential leads by understanding the needs and preferences of different customer segments. For example, scraping data from industry forums or discussion boards can reveal individuals or businesses expressing a need that your product or service can fulfill.

Brand monitoring

By using web scraping to track mentions of your brand across the internet, and analyzing the qualitative data from these mentions, you can gain a clearer understanding of your brand’s perception. This allows you to respond to negative comments, engage with positive ones, and effectively manage your brand reputation.

Content creation ideas

Analyzing qualitative data from social media, blogs, and other platforms can provide insights into what kind of content resonates with your target audience. This can guide you in creating engaging and relevant content that meets the needs and interests of your audience.

Customer support

Web scraping can be used to collect customer complaints, queries, and feedback from various online sources. By analyzing this qualitative data, you can identify common issues and improve your products or services and customer support. It can also help you respond more quickly to customer issues, thereby improving customer satisfaction.

Emerging trends

Qualitative data can help you identify new trends and opportunities in the market. By scraping and analyzing data from social media, news sites, blogs, and other platforms, you can stay ahead of the curve and adapt your strategies to changing market conditions.

Steps To Analyze Qualitative Data

You’ll need to follow a structured approach to collecting and analyzing qualitative data to extract the most value.

Clarify your research objectives

Before you do anything else, you need to know what your goal is. What do you want to find out from your data? Do you want to do market research for a new product or analyze customer sentiment about a social issue? You’ll get lost in the ocean of internet data without a clearly defined goal.

Identify sources

Although the internet is a vast and rich source of almost every type of data, it’s also full of wrong, misleading, and irrelevant data. There are troll farms and bot factories that exist solely to generate bad data and misinformation. You want to make sure you’re getting your data from reliable sources so that you can draw reliable conclusions from it.

In computer science, the phrase “garbage in, garbage out” means that poor-quality input will result in poor-quality output. Your research is only as good as your data.

However, that doesn’t mean all your data must come from serious academic sources. Scraping online review sites can give you a lot of information about popular product features. Following a relevant hashtag on social media can provide insight into how your ideal customer feels about the latest trend.

Think about where your ideal customer hangs out on the internet. Do they participate in social media? Follow certain blogs? Submit reviews to an online store? The places your ideal customer frequents are good starting points for collecting data.

Here are some examples of good places to find qualitative data for various use cases.

Competitor analysis

- Company websites and blogs: Analyzing content, product descriptions, and news updates can provide insight into a competitor’s strategies and upcoming offerings.

- Review websites: These sites contain customer reviews of products and services, which can reveal strengths and weaknesses in a competitor’s offerings.

- Social media platforms: Businesses often share updates and engage with customers on these platforms. You can gain insights into a competitor’s customer engagement strategies and customer sentiment toward their brand.

Market research

- Discussion forums: These platforms contain a wealth of user-generated content, offering unfiltered opinions and discussions about a wide variety of topics.

- Industry-specific blogs and news sites: These can provide insights into market trends, industry news, and expert opinions.

- Social media platforms: They are particularly useful for understanding customer preferences and sentiment, as well as identifying trending topics.

Lead generation

- Professional networking sites: These platforms can provide information about potential B2B (business to business) clients, including company size, industry, and key personnel.

- Industry forums and directories: These can provide contact information and details about potential leads in specific industries.

Brand monitoring

- Social media platforms: These are crucial for tracking mentions of your brand and understanding customer sentiment.

- Review websites: These sites can provide feedback about your products or services.

- News websites: They can help you monitor any media coverage of your brand.

Content creation ideas

- Content aggregation sites: These sites can help identify popular content in your industry or niche.

- Social media platforms: Trending topics and popular posts can provide inspiration for your own content.

Customer support

- Review websites: These sites can highlight common issues or complaints about your products or services.

- Your own social media pages: Customers often use these platforms to ask questions or report issues.

Emerging trends

- News websites and industry blogs: These are useful for staying up-to-date with industry trends and news.

- Social media platforms: They are great for identifying trending topics and understanding what is currently capturing public interest.

Collect data

Once you’ve identified a good source of the data, you can start collecting it. You can do this manually by visiting websites and keeping a spreadsheet, but why would you? You can automate the process using a web scraper and get the data you need in minutes.

Web scraping can be a bit technical, so we’ll go into more detail later in this article. Briefly, a software script (or bot) searches through a website’s structure, extracts the data you need, and exports it to a usable format, such as a CSV or JSON file.
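
The export step might look like the following Python sketch, which uses only the standard library. The `records` list stands in for whatever your scraper extracted. The field names (`source`, `rating`, `text`) are assumptions made for the example.

```python
import csv
import io
import json

# Hypothetical scraped records; the field names are illustrative.
records = [
    {"source": "example-reviews", "rating": "4", "text": "Great battery, weak camera."},
    {"source": "example-forum", "rating": "", "text": "Anyone else find setup confusing?"},
]

def to_csv(rows):
    """Serialize rows to CSV text, quoting fields that contain commas."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["source", "rating", "text"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

def to_json(rows):
    """Serialize rows to human-readable JSON text."""
    return json.dumps(rows, indent=2, ensure_ascii=False)

print(to_csv(records))
print(to_json(records))
```

To save the results, write either string to a file. CSV opens easily in spreadsheet software, while JSON preserves nesting if your records grow more complex.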

Clean and organize data

Once you have your data in a usable format, you’ll need to clean it up by removing bad data, such as erroneous, inaccurate, or duplicate information. You’ll also need to process it for analysis using data processing tools such as Excel or Python.
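
A minimal cleaning pass in Python might look like this sketch. It normalizes whitespace, drops empty entries, and removes case-insensitive duplicates. The `clean_records` helper and the sample strings are hypothetical. Catching erroneous or inaccurate entries usually takes additional checks specific to your data.

```python
import re

def clean_records(texts):
    """Collapse whitespace, drop blanks, and remove case-insensitive duplicates."""
    seen = set()
    cleaned = []
    for text in texts:
        normalized = re.sub(r"\s+", " ", text).strip()
        key = normalized.casefold()
        if not normalized or key in seen:
            continue  # skip empty strings and anything already kept
        seen.add(key)
        cleaned.append(normalized)
    return cleaned

# Invented raw data for illustration.
raw = ["  Great   product! ", "great product!", "", "   ", "Too pricey"]
print(clean_records(raw))
```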

Analyze the data

You’ll use techniques such as text mining or machine learning to analyze qualitative data. Identify keywords, phrases, or sentiments that correlate with the themes you’re investigating.

Categorize your data based on themes to identify key areas related to your topic. Once you have categorized your data, you can identify trends and patterns that will tell you what you want to know.
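
One simple form of text mining is counting which terms appear most often, which can hint at themes worth investigating. The sketch below assumes a tiny hand-picked stopword list and invented sample comments. A real project would likely use a fuller stopword list or a dedicated text-analysis library.

```python
import re
from collections import Counter

# Small illustrative stopword list; extend it for real data.
STOPWORDS = {"the", "a", "an", "is", "it", "and", "but", "to", "of", "i", "was", "my", "too"}

def top_terms(texts, n=3):
    """Return the n most frequent non-stopword terms with their counts."""
    counts = Counter()
    for text in texts:
        words = re.findall(r"[a-z']+", text.lower())
        counts.update(word for word in words if word not in STOPWORDS)
    return counts.most_common(n)

# Invented sample comments for illustration.
comments = [
    "The battery is great",
    "Battery died fast",
    "Great screen, great battery",
]
print(top_terms(comments, n=2))
```

Frequent terms are only a starting point. Reading the comments that contain them tells you whether "battery" shows up as praise or as a complaint.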

Share your results

After you’ve analyzed your data and mined it for insights, you’ll want to share your conclusions with stakeholders and others in your company who can benefit from it. You can use data visualization tools such as Tableau or stick with the data visualization tools in your spreadsheet software.

How To Scrape Qualitative Data

Scraping is one of the most efficient and cost-effective ways to collect qualitative data. Web scrapers are widely available at various price points. If you want to start slow and simple, you can go with a basic Chrome browser extension. But if you want to put your technical chops to the test or need a customized solution, you can build one yourself, even with limited coding skills.

Using a web scraper

You’ll need to tell your web scraper what data you want. You can do this by visiting your target website and locating an example of the data you want to collect. Find the website’s HTML structure by inspecting the source code. While this sounds complicated, it’s pretty straightforward. Your browser will have a way to access a website’s source code. For instance, the shortcut on Google Chrome is “Ctrl + U,” or you can right-click the element and choose “Inspect” to jump straight to its markup.

Locate the data and its associated HTML tag and attributes, such as a class name or ID. Those are the instructions you’ll give your web scraper to tell it where to find your data. Once it identifies the data you want, it’ll extract and export it into a file where you can clean, organize, and analyze it. Start slow and small, and you’ll learn as you go.
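
Here is a minimal sketch of that idea using Python’s built-in `html.parser` module. The sample HTML and the `review-text` class name are made up for the example. On a real site you would substitute the tag and class you found when inspecting the page, and many people prefer a third-party parsing library for anything more complex.

```python
from html.parser import HTMLParser

class ClassTextExtractor(HTMLParser):
    """Collect the text of every <tag> element that carries a given class."""

    def __init__(self, tag, class_name):
        super().__init__()
        self.tag = tag
        self.class_name = class_name
        self.depth = 0       # greater than 0 while inside a matching element
        self.results = []
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if self.depth:
            if tag == self.tag:
                self.depth += 1  # same tag nested inside a match
        elif tag == self.tag:
            classes = (dict(attrs).get("class") or "").split()
            if self.class_name in classes:
                self.depth = 1
                self._buffer = []

    def handle_endtag(self, tag):
        if self.depth and tag == self.tag:
            self.depth -= 1
            if self.depth == 0:
                # Join the collected pieces and normalize whitespace.
                self.results.append(" ".join("".join(self._buffer).split()))

    def handle_data(self, data):
        if self.depth:
            self._buffer.append(data)

# Invented page fragment for illustration.
SAMPLE_HTML = """
<div class="review"><p class="review-text">Battery lasts <b>all day</b>.</p></div>
<div class="review"><p class="review-text featured">Screen scratches easily.</p></div>
<p class="footer">Contact us</p>
"""

extractor = ClassTextExtractor("p", "review-text")
extractor.feed(SAMPLE_HTML)
print(extractor.results)
```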

Using proxies

In addition to a web scraper, you’ll need proxies. A proxy serves as an intermediary between your computer and the internet and hides your real IP address from the websites you’re visiting. A web scraper is a bot, and most websites don’t like bots — which is understandable. Some unethical people use them for nefarious purposes, and they can negatively affect a website’s performance. Websites often have anti-bot software that automatically detects bots and blocks their IP addresses, shutting them down.

One of the ways a website can tell an actual visitor from a bot is by how fast it sends requests. Because bots are so much faster than humans, they can send thousands of requests before a human can send one. If a website detects requests coming from an IP address at inhuman speeds, it blocks the IP address to shut down the bot.
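
To see why request speed gives bots away, consider this simplified sliding-window counter in Python. It is a sketch of the general idea only. The `RateMonitor` class and its thresholds are hypothetical, and real anti-bot systems combine many more signals than request rate.

```python
from collections import defaultdict, deque

class RateMonitor:
    """Flag an IP that exceeds max_requests within window_seconds."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.history = defaultdict(deque)  # ip -> recent request timestamps

    def allow(self, ip, now):
        timestamps = self.history[ip]
        # Forget requests that have aged out of the window.
        while timestamps and now - timestamps[0] >= self.window_seconds:
            timestamps.popleft()
        timestamps.append(now)
        return len(timestamps) <= self.max_requests

# Allow at most 3 requests per second from any single IP (illustrative threshold).
monitor = RateMonitor(max_requests=3, window_seconds=1.0)
decisions = [monitor.allow("203.0.113.5", t) for t in (0.0, 0.1, 0.2, 0.3)]
print(decisions)
```

The fourth request arrives inside the same one-second window as the first three, so it is the one that trips the limit.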

Proxies make your scraper appear more human. If you use a rotating pool of proxies, like those available from Rayobyte, every request is sent with a different IP address — allowing your web scraper to escape detection.
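
A rotating pool can be sketched with Python’s standard library as shown below. The proxy addresses are placeholders, not real endpoints. Your provider will issue its own hosts, ports, and usually credentials, and many providers handle rotation for you behind a single gateway address.

```python
import itertools
import urllib.request

# Placeholder proxy endpoints; substitute the ones your provider issues.
PROXIES = [
    "http://proxy1.example.com:8000",
    "http://proxy2.example.com:8000",
    "http://proxy3.example.com:8000",
]

def proxy_rotation(proxies):
    """Return an endless iterator that cycles through the proxy list."""
    return itertools.cycle(proxies)

def build_opener_for(proxy_url):
    """Create a URL opener that routes HTTP and HTTPS traffic through one proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)

rotation = proxy_rotation(PROXIES)

def fetch(url, timeout=10):
    """Fetch a URL through the next proxy in the rotation."""
    opener = build_opener_for(next(rotation))
    with opener.open(url, timeout=timeout) as response:
        return response.read()
```

Each call to `fetch` picks the next proxy in the list and wraps around at the end, so consecutive requests leave from different IP addresses.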

However, there are some steps you should take to make sure you’re scraping ethically:

- Scrape during nonpeak hours.

- Slow down your scraper so that it won’t overwhelm servers.

- Don’t collect data you don’t need.

- Use an API if one is available and it has the data you need.
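
Some of these habits can be built directly into your scraper. The Python sketch below pauses between requests and also skips pages that a site’s robots.txt file disallows, a common courtesy that goes slightly beyond the list above. The `polite_crawl` helper, the user agent name, and the two-second delay are assumptions for illustration. The `fetch` argument is whatever function you use to download a page.

```python
import time
import urllib.robotparser

def build_robot_rules(robots_txt):
    """Parse the text of a robots.txt file into a rules object."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

def polite_crawl(urls, rules, fetch, user_agent="example-research-bot",
                 delay_seconds=2.0, sleep=time.sleep):
    """Fetch only allowed URLs, pausing between consecutive requests."""
    pages = {}
    for url in urls:
        if not rules.can_fetch(user_agent, url):
            continue  # respect the site's robots.txt rules
        if pages:
            sleep(delay_seconds)  # slow down so we don't overwhelm the server
        pages[url] = fetch(url)
    return pages

# Invented robots.txt content for illustration.
rules = build_robot_rules("User-agent: *\nDisallow: /private/\n")
```

Passing `sleep` as a parameter makes the delay easy to swap out when testing. In normal use the default `time.sleep` applies.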

Choosing a Proxy Provider

Because proxies are so important for modern qualitative data collection, choosing the right provider matters. While free proxies are available on the internet, they carry serious risks: they expose you to cybersecurity threats and perform poorly because they’re so overloaded.

Rayobyte wants to be your data partner, not just your provider, and help you achieve all of your data-related goals. We set the industry standard for ethical proxy use. Our residential, ISP, mobile, and data center proxies are the best and fastest available on the market.

You’ll get 24/7 live customer support, a large proxy pool, and a 99.9% uptime guarantee for ultimate ban reduction. Reach out today to learn more.

Next Steps in Qualitative Data Collection

Qualitative data is more challenging to collect and analyze than quantitative data, but it can often provide deeper and more meaningful insights that can help your business succeed. Choosing the right data sources and using a web scraper and proxies to extract data will be much cheaper and more efficient than using traditional methods to collect qualitative data.

If you’re willing to put in the effort to collect and analyze qualitative data, you’ll be rewarded with information that can give you a competitive edge in today’s uncertain marketplace.

The information contained within this article, including information posted by official staff, guest-submitted material, message board postings, or other third-party material is presented solely for the purposes of education and furtherance of the knowledge of the reader. All trademarks used in this publication are hereby acknowledged as the property of their respective owners.